Managing Adverse Event Reviews: Medical Monitor’s Essential Guide

Medical Monitor AE review is where patient safety, regulatory defensibility, and trial credibility collide—fast. The job isn’t to “approve narratives.” It’s to detect true safety signals early, prevent classification drift, enforce consistent causality/seriousness decisions, and make sure every SAE becomes a clean, inspection-proof story supported by source, timeline, and rationale. When AE reviews are weak, trials bleed time in queries, misreport events, trigger avoidable CAPAs, and risk inspection findings. This guide gives Medical Monitors an operational playbook: triage logic, review checklists, escalation thresholds, and documentation patterns that keep sponsors, sites, and regulators aligned.

1. The Medical Monitor’s AE Review Mission: Safety Signal Control + Defensible Decisions

A Medical Monitor’s AE review function is a quality system, not a clinical opinion hotline. If your review process cannot survive an inspection replay, it is not complete. That means every decision you make—seriousness, expectedness, relatedness, outcome, action taken, and follow-up needs—must map to a traceable evidence chain that fits trial mechanics and safety reporting timelines.

Your work sits upstream of pharmacovigilance outputs such as drug safety reporting timelines and regulatory requirements. It sits downstream of site documentation quality, which is shaped by how sites identify, report, and manage adverse events (AEs), and it is tightly coupled to the process discipline covered in essential adverse event reporting techniques for CRCs. If these layers don’t align, you’ll see predictable failure modes: late SAE awareness, “not clinically significant” language masking real severity, missing stop dates, fuzzy causality rationale, and inconsistent coding patterns that sabotage aggregate analyses.

A strong Medical Monitor also understands the trial governance around safety oversight: how a Data Monitoring Committee (DMC) functions, why patient safety oversight is a PI duty, and how site-level behaviors described in adverse event handling: essential PI guidelines affect the quality of what lands in your queue.

Where Medical Monitors get crushed is not volume—it’s ambiguity. Ambiguity comes from incomplete narratives, missing chronology, protocol deviations, and unclear endpoint rules. That’s why Medical Monitor AE review must be integrated with protocol clarity from clinical trial protocol management responsibilities and the master framework in clinical trial protocol: definitive guide with examples. If the protocol defines what constitutes an event of special interest, a stopping rule, or an endpoint-adjacent event, your review must enforce it consistently—or your safety dataset becomes scientifically noisy and regulator-hostile.

2. Building a Medical Monitor AE Review Workflow That Prevents Late Reporting and Rework

The biggest AE review pain point is time wasted on preventable follow-ups. Most follow-ups come from the same missing pieces: incomplete chronology, unclear seriousness criteria, absent causality reasoning, and missing objective corroboration. A Medical Monitor’s job is to create a workflow that forces completeness early.

Step 1: Start with triage, not full narrative polishing

Not all events deserve equal time. Use a triage filter that immediately flags:

Serious AEs and borderline seriousness scenarios (e.g., ED visit, hospitalization “for observation,” prolonged disability questions)

AESIs and protocol-defined special interest categories tied to the clinical trial protocol

Events with potential impact on endpoints defined in primary vs secondary endpoints

Potential unblinding risk described in blinding in clinical trials

This triage reduces the classic failure pattern where teams spend time perfecting low-risk narratives while a time-sensitive SAE is still missing the first awareness timestamp required to meet drug safety reporting timelines.

Step 2: Enforce the “timeline spine” for every case

Every AE review should begin with a strict timeline backbone:

symptom onset date/time (or best estimate)

first awareness by site staff

first awareness by sponsor/CRO/PV (as applicable)

escalation timestamp

hospitalization/diagnostic dates

action taken with IP and the date it occurred

resolution date or last known status

If your narratives don’t consistently include this spine, your safety program becomes fragile, especially when building outputs like aggregate reports in pharmacovigilance and regulatory submissions in pharmacovigilance.
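As a minimal sketch of how the timeline spine could be enforced at intake, the check below lists missing fields and flags two basic chronology conflicts. The `TimelineSpine` class and its field names are hypothetical, not taken from any safety database, and hospitalization/diagnostic dates are left out because not every case has them.

```python
from dataclasses import dataclass, fields
from datetime import datetime
from typing import Optional


@dataclass
class TimelineSpine:
    """Hypothetical record of the timeline spine for one AE case."""
    onset: Optional[datetime] = None
    site_awareness: Optional[datetime] = None
    sponsor_awareness: Optional[datetime] = None
    escalation: Optional[datetime] = None
    ip_action: Optional[datetime] = None
    resolution_or_last_status: Optional[datetime] = None


def spine_gaps(spine: TimelineSpine) -> list[str]:
    """Return missing spine fields plus basic chronology problems."""
    problems = [
        f"missing: {f.name}"
        for f in fields(spine)
        if getattr(spine, f.name) is None
    ]
    # Site awareness cannot precede onset.
    if spine.onset and spine.site_awareness and spine.site_awareness < spine.onset:
        problems.append("site_awareness is earlier than onset")
    # Escalation cannot precede site awareness.
    if (spine.site_awareness and spine.escalation
            and spine.escalation < spine.site_awareness):
        problems.append("escalation is earlier than site_awareness")
    return problems
```

An empty list from `spine_gaps` means the spine is complete and internally consistent under these two checks. It says nothing about whether the dates match source.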

Step 3: Standardize causality reasoning so it doesn’t “drift by personality”

Causality decisions often vary based on individual style. That inconsistency creates regulator suspicion and undermines signal detection. To fix it, require causality rationale to explicitly address:

temporal relationship (dose timing vs onset)

biologic plausibility/mechanism

alternative etiologies (comorbidities, infections, concomitant meds)

dechallenge/rechallenge if applicable

objective evidence supporting the conclusion

This ties directly to the practical safety culture described in what is pharmacovigilance and strengthens alignment with site behaviors outlined in adverse event reporting techniques for CRCs and AE handling guidelines for PIs.

Step 4: Integrate monitoring and audit readiness

Your AE review decisions must survive CRA scrutiny and sponsor audits. If a CRA can’t reconcile the AE narrative with source and CRF, you’ll generate query storms and future findings. Medical Monitors should routinely cross-align their expectations with CRA documentation techniques and inspection patterns described in clinical trial auditing and inspection readiness. The goal is a single story across source → CRF → safety database → narrative → submission.

3. Medical Monitor AE Review Playbook: Triage, Follow-Up, Coding, and Signal Escalation

This is the operational core. If you implement the following playbook consistently, you’ll cut rework, improve timeliness, and increase signal sensitivity without drowning the team.

A) Triage rules that protect time-sensitive obligations

Use “if-then” triage to force urgency where it belongs:

If there is any hospitalization, ED visit, life-threatening language, or death mention → treat as SAE until proven otherwise, reconcile seriousness criteria with objective documentation, and align with AE identification/reporting fundamentals.

If the event plausibly relates to investigational product or mechanism → require explicit causality reasoning and dechallenge logic, and consider how it impacts aggregate safety reports.

If the event could affect endpoints, eligibility, or protocol-defined stopping criteria → cross-check the protocol guide and endpoint definitions like primary vs secondary endpoints.

These rules prevent the “administrative first” approach where the team spends time harmonizing wording while missing the reporting clock defined in drug safety reporting timelines.
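The three if-then rules above can be sketched as a small function. This is illustrative only: the `CaseFlags` fields are hypothetical intake flags, and the "routine review" fallback is an assumption added so the function always returns something.

```python
from dataclasses import dataclass


@dataclass
class CaseFlags:
    """Hypothetical intake flags; field names are illustrative."""
    hospitalization: bool = False
    ed_visit: bool = False
    life_threatening_language: bool = False
    death_mentioned: bool = False
    plausibly_ip_related: bool = False
    touches_endpoint_or_stopping_rule: bool = False


def triage(case: CaseFlags) -> list[str]:
    """Return the actions triggered by the if-then rules, seriousness first."""
    actions = []
    if (case.hospitalization or case.ed_visit
            or case.life_threatening_language or case.death_mentioned):
        actions.append("treat as SAE until proven otherwise")
    if case.plausibly_ip_related:
        actions.append("require explicit causality reasoning and dechallenge logic")
    if case.touches_endpoint_or_stopping_rule:
        actions.append("cross-check protocol and endpoint definitions")
    # Assumed fallback: nothing fired, so the case goes to the normal queue.
    return actions or ["routine review"]
```

The rules are deliberately not exclusive: a hospitalization that is also plausibly IP-related returns both actions, with the seriousness action listed first.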

B) Follow-up question design that eliminates looped queries

Most follow-ups fail because they ask “Please provide more details” instead of asking targeted questions that close a specific decision gap. Your follow-up template should include:

Chronology: onset date/time, awareness date/time, intervention timeline

Objective severity evidence: vitals, labs, imaging, clinician impression

Outcome and status: resolved date, ongoing status, sequelae

Action taken: IP held/restarted/discontinued; treatment given

Causality rationale: why related/unrelated with alternatives

This approach aligns with how sites are trained in CRC adverse event reporting techniques and reduces downstream conflict with monitoring teams focused on trial documentation quality.
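One way to keep follow-ups targeted is to generate questions only for the sections that are actually missing. The sketch below assumes a case is a plain dictionary with hypothetical keys; the question wording is illustrative and would need to match your own template.

```python
# Targeted questions keyed by the decision gap they close (wording is illustrative).
FOLLOW_UP_QUESTIONS = {
    "chronology": "Provide onset date/time, awareness date/time, and the intervention timeline.",
    "severity_evidence": "Provide vitals, labs, imaging, and the clinician impression that support the severity.",
    "outcome": "State the resolved date, or confirm ongoing status and any sequelae.",
    "action_taken": "State whether IP was held, restarted, or discontinued, and what treatment was given.",
    "causality_rationale": "Explain why the event is related or unrelated, including alternative etiologies.",
}


def build_follow_up(case: dict) -> list[str]:
    """Ask only about sections that are absent or blank in the case record."""
    return [
        question
        for key, question in FOLLOW_UP_QUESTIONS.items()
        if not str(case.get(key) or "").strip()
    ]
```

A case with every section filled in returns an empty list, which is the point: no gap, no query.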

C) Coding sanity checks that protect your aggregate signal

You don’t need to be the MedDRA coder to enforce coding quality. You do need to protect clinical meaning. Two high-impact checks:

Does the coded term match the narrative?

Is the term too broad or too specific given evidence?

If coding is sloppy, your aggregate signal becomes junk, and your outputs in pharmacovigilance reporting and regulatory PV submissions become harder to defend.

D) Signal detection: convert case review into trend intelligence

Medical Monitors often review case-by-case and forget to build trend memory. Create a watchlist by:

syndrome/clinical theme

timing relative to dosing

site clustering

severity escalation patterns

outcome patterns and treatment requirements

Then connect this watchlist into independent oversight pathways like DMC roles in trials. Your goal is not to “prove causality” early—your goal is to surface credible signals early enough to manage risk.
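A watchlist can start as a simple grouping of reviewed cases by clinical theme. The sketch below is a minimal illustration: the dictionary keys (`theme`, `site`, `grade`) and the `min_cases` cutoff are assumptions, and timing relative to dosing is left out to keep it short.

```python
from collections import defaultdict


def build_watchlist(cases: list[dict], min_cases: int = 2) -> dict[str, dict]:
    """Summarize themes that recur at least `min_cases` times."""
    grouped = defaultdict(list)
    for case in cases:
        grouped[case["theme"]].append(case)

    watchlist = {}
    for theme, members in grouped.items():
        if len(members) >= min_cases:
            watchlist[theme] = {
                "cases": len(members),
                # Distinct sites reveal whether the theme clusters at one site.
                "sites": sorted({m["site"] for m in members}),
                # Highest grade seen so far, to track severity escalation.
                "max_grade": max(m["grade"] for m in members),
            }
    return watchlist
```

The output is trend memory, not a causality verdict. It only tells you which themes deserve a second look.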

What is your biggest Medical Monitor AE review bottleneck?

Pick one. Each option maps to a specific fix strategy.

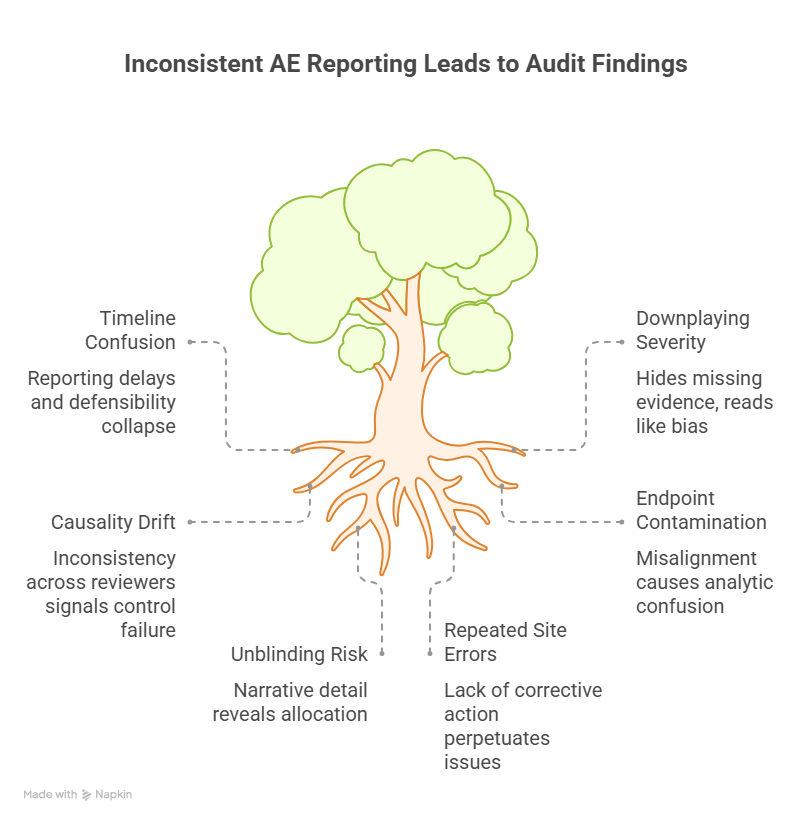

4. Common Failure Modes in AE Reviews That Trigger Findings (and How Medical Monitors Stop Them)

If you want to prevent audit pain, focus on the failure modes that reliably produce findings, delays, and distrust. These are the patterns that get trials in trouble—not the rare edge cases.

1) Timeline confusion that breaks reporting compliance

The reporting clock frequently starts at first awareness, not when the team “confirmed details.” When sites confuse onset with awareness or delay escalation pending diagnostics, reporting becomes late and defensibility collapses. Medical Monitors should enforce “escalate first, complete later,” aligned with drug safety reporting requirements and training expectations from AE reporting techniques for CRCs.
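To make the awareness-versus-confirmation error concrete, here is a small sketch that measures the deadline from first awareness. The 24-hour window used in the example is a placeholder, not a regulatory value; the real window comes from the protocol and the applicable reporting requirements.

```python
from datetime import datetime, timedelta


def reporting_deadline(first_awareness: datetime, window: timedelta) -> datetime:
    """Deadline measured from first awareness, not from confirmation."""
    return first_awareness + window


def is_late(clock_start: datetime, reported_at: datetime, window: timedelta) -> bool:
    """True if the report went out after the deadline for the given clock start."""
    return reported_at > reporting_deadline(clock_start, window)


# Placeholder scenario: the site waits two days for diagnostics before escalating.
aware = datetime(2024, 1, 5, 9, 0)
confirmed = datetime(2024, 1, 7, 9, 0)
reported = datetime(2024, 1, 7, 10, 0)
window = timedelta(hours=24)  # placeholder window, see note above

late_from_awareness = is_late(aware, reported, window)         # True
late_from_confirmation = is_late(confirmed, reported, window)  # False
```

The same report looks on time when the clock is wrongly started at confirmation and late when it is started at awareness, which is exactly how delayed escalation hides until an inspection.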

2) Downplaying severity with vague language

Phrases like “not clinically significant” often hide missing evidence. That wording becomes toxic during inspections because it reads like bias. Replace minimization with objective facts. If the evidence is missing, demand it. This protects the study narrative and the PI’s oversight role described in patient safety oversight guidance for PIs.

3) Causality drift and inconsistency across reviewers

When one reviewer says “possibly related” and another says “unrelated” for similar cases without a clean rationale, regulators interpret it as control failure. Fix it by enforcing structured causality logic and requiring justification for any change across follow-ups. This supports coherent downstream outputs like aggregate PV reports.

4) Endpoint contamination and protocol misalignment

Events that overlap endpoints must be handled consistently. A cardiovascular event might be both a safety event and an efficacy endpoint depending on design. Misalignment between AE handling, endpoint definitions, and the protocol framework causes analytic confusion and query storms.

5) Unblinding risk through narrative detail

AE narratives can accidentally reveal allocation through lab patterns, dosing details, or side-effect phrasing. Medical Monitors should remain vigilant about protections described in blinding guidance and ensure processes exist to limit unblinding exposure.

6) Repeated site errors with no corrective mechanism

If the same site repeatedly misses awareness timestamps or provides incomplete narratives, you need corrective action, not more reminders. Link repeated issues to retraining and process fixes, and ensure alignment with broader quality systems used in audit/inspection readiness.

A Medical Monitor becomes “essential” when they stop these failure modes before they snowball into findings.

5. Medical Monitor AE Review Toolkit: Templates, Decision Rules, and Integration with PV Outputs

To make AE review scalable, you need reusable tools. Here are the highest-impact components.

1) “One-page case” template (for every serious or complex AE)

Require a standardized summary that includes:

timeline spine (onset → awareness → escalation → outcome)

seriousness criteria mapping

severity grade justification

causality logic (timing, mechanism, alternatives, dechallenge)

action taken with IP and concomitant interventions

follow-up plan with deadlines

This is the fastest way to build inspection-ready narratives that integrate cleanly into pharmacovigilance workflows like regulatory submissions in PV and aggregate safety reports.

2) Escalation thresholds tied to governance (DMC/leadership)

Define triggers early, not after a signal becomes obvious. For example:

same severe event appearing across multiple sites

unexpected pattern by timing relative to dosing

mortality or life-threatening clusters

AESI threshold reached

Then align with the oversight structures described in DMC roles and sponsor safety leadership workflows.
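Defining triggers early can be as simple as writing them down as numbers and checking them on every review cycle. In the sketch below, the threshold values and case keys (`term`, `site`, `severe`, `life_threatening_or_fatal`, `aesi`) are all placeholders; real values would be agreed in advance by the study team. The timing-pattern trigger is not coded because it needs clinical judgment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationThresholds:
    """Placeholder limits; real values are agreed before a signal appears."""
    min_sites_same_severe_event: int = 2
    min_life_threatening_or_fatal: int = 2
    aesi_case_limit: int = 3


def escalation_triggers(
    cases: list[dict],
    limits: EscalationThresholds = EscalationThresholds(),
) -> list[str]:
    """Return a description of each predefined trigger that has fired."""
    triggers = []

    # Same severe event appearing across multiple sites.
    sites_by_term: dict[str, set] = {}
    for case in cases:
        if case["severe"]:
            sites_by_term.setdefault(case["term"], set()).add(case["site"])
    for term, sites in sorted(sites_by_term.items()):
        if len(sites) >= limits.min_sites_same_severe_event:
            triggers.append(f"severe '{term}' at {len(sites)} sites")

    # Mortality or life-threatening clusters.
    if sum(c["life_threatening_or_fatal"] for c in cases) >= limits.min_life_threatening_or_fatal:
        triggers.append("life-threatening or fatal cluster")

    # AESI threshold reached.
    if sum(c["aesi"] for c in cases) >= limits.aesi_case_limit:
        triggers.append("AESI threshold reached")

    return triggers
```

Keeping the limits in one frozen object makes it easy to show, after the fact, that the thresholds were fixed before the data arrived.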

3) Training feedback loop to sites

Medical Monitors should not “own” all quality corrections. Use repeated review gaps to feed training needs back into sites via CRC/PI training pathways.

This is how you convert “review” into prevention.

4) Documentation integration with CRF and source expectations

Your case story must match the CRF structure described in CRF definition, types, and best practices. If the CRF fields cannot support the clinical nuance, you need a controlled narrative supplement—not inconsistent ad hoc notes.

Finally, consider the broader staffing and capability ecosystem. Strong Medical Monitor performance also depends on the quality of the teams around you—sites and CRO partners often sourced through clinical research staffing agencies and supported by ongoing upskilling via continuing education providers and certification provider comparisons. AE review maturity is a workforce maturity problem as much as it is a process problem.

6. FAQs: Managing Adverse Event Reviews for Medical Monitors

-

A clean, evidence-backed timeline spine (onset → awareness → escalation → interventions → outcome) plus consistent seriousness/causality rationale. Without timeline discipline, even clinically reasonable conclusions become hard to defend against drug safety reporting timelines.

-

Causality can evolve as evidence arrives, but any change must be justified with specific new data (diagnostics, dechallenge, alternative etiology confirmation). Unexplained changes look like control failure and weaken aggregate analyses used in PV aggregate reports.

-

Teams wait for complete details instead of escalating at first awareness. Medical Monitors should reinforce “escalate first, complete later,” consistent with AE reporting fundamentals and CRC reporting techniques.

-

Use targeted follow-up templates, publish examples of “gold standard” narratives, and trigger retraining when the same gaps repeat. Tie training to CCRPS role guides like CRC responsibilities and PI oversight topics like patient safety oversight.

-

Patterns that indicate a credible emerging signal: clustering by syndrome/timing/site, unexpected severity, life-threatening outcomes, or AESI threshold breach. Align escalation logic with DMC roles in trials.

-

Standardize causality reasoning, enforce the timeline spine, use a shared triage rubric, and require justification for classification changes. Consistency is what makes downstream PV outputs—like regulatory PV submissions—credible.