Mastering Remote & On-Site Monitoring Visits as a CRA

Monitoring visits are where a CRA’s judgment becomes visible. It is one thing to understand GCP compliance, site selection and qualification, and audit readiness in theory. It is another to walk into a stressed site or open a remote review portal and quickly determine whether the study is under control, where the risks are hiding, and which findings threaten data integrity or patient safety. Strong monitoring is not about checking boxes faster. It is about seeing patterns early, asking sharper questions, documenting precisely, and guiding sites toward durable correction.

1. What Great CRA Monitoring Actually Looks Like in Remote and On-Site Visits

A strong CRA does far more than verify data points against source. Great monitoring means evaluating whether the site is protecting participants, following the clinical trial protocol, maintaining defensible clinical trial documentation, complying with good clinical practice, and escalating issues before they evolve into serious findings. Whether the visit is remote or on-site, the mission stays the same: verify rights, safety, data credibility, investigational product control, and site process stability through evidence rather than assumption.

Many new CRAs think on-site visits are the “real” monitoring work and remote visits are a lighter version of the same task. That mindset creates blind spots. Remote monitoring demands tighter pre-review discipline, stronger written communication, sharper prioritization, and more deliberate evidence requests because you cannot rely on casual hallway clarification or quick access to paper binders. On-site monitoring gives broader situational awareness, but it also tempts weak monitors into wasting time on logistics, friendly conversation, or low-yield document checks while higher-risk issues sit unchallenged. In both modes, the real skill is risk-based attention, grounded in a firm command of monitoring terminology, GCP and ICH principles, and the operational realities of investigator site management.

Great monitoring begins before the visit. A CRA should enter every review with a risk map: enrollment status, protocol amendments, unresolved action items, prior findings, data-entry lag, open queries, safety events, investigational product concerns, staff turnover, delegation changes, subject withdrawals, and trends in deviations. That map shapes the visit scope and prevents the classic failure of treating every subject and every document as equally urgent. The monitor who understands how protocol deviations, serious adverse events, informed consent requirements, and site documentation control interact can identify risk faster than the monitor who simply follows a generic checklist.

Another mark of great monitoring is how findings are framed. Weak CRAs identify errors. Strong CRAs explain what the error signals about the site’s system. A missing consent signature may reveal rushed intake workflow. Repeated out-of-window visits may point to broken scheduling controls. Delayed AE entry may indicate weak reconciliation between source and safety review. Inaccurate IP counts may expose deeper accountability failures. When a CRA writes and communicates findings this way, sites understand the problem in operational terms instead of treating each issue as isolated bad luck.

Finally, great monitoring is not punitive. It is disciplined, skeptical, and exacting, but it is also constructive. The best CRAs do not soften real issues, yet they help sites understand what must change. They convert scattered observations into a coherent risk picture. That is what makes a monitor valuable to sponsors, sites, and ultimately to patients.

Remote vs On-Site Monitoring Visit Control Matrix for CRAs (30 Critical Checks)

Use this matrix to decide what to verify, what to escalate, and what remote review often hides until it becomes a sponsor problem.

| # | Monitoring Area | What a Strong CRA Checks | Remote Visit Risk | On-Site Visit Risk |
|---|---|---|---|---|
| 1 | Visit scope planning | Subject selection tied to risk, not routine | Overreviewing low-risk data | Time lost to uncontrolled agenda drift |
| 2 | Prior action items | Evidence of closure, not verbal reassurance | Missing proof in uploads | Accepting hallway explanations without documentation |
| 3 | Enrollment status | Active, withdrawn, screened, completed subjects reconciled | Lagging trackers | Outdated paper logs not cross-checked |
| 4 | Delegation log | Current roles, signatures, dates, qualified staff linkage | Partial scans hide timing gaps | Unfiled versions sitting outside binder |
| 5 | Training records | Protocol, amendment, system, safety training current | Missing certificates not uploaded | Site says training occurred but records incomplete |
| 6 | IRB approvals | Current approval periods and implemented documents match use | Version confusion across folders | Old stamped copies mixed into active files |
| 7 | Consent forms | Correct version, dates, signatures, timing before procedures | Scan quality obscures dates or initials | Paper packet disorder hides missing pages |
| 8 | Consent process evidence | Source supports discussion and subject opportunity to ask questions | Only final form visible | Overreliance on coordinator verbal explanation |
| 9 | Eligibility review | Every inclusion/exclusion criterion supported by source | Key records withheld for privacy workflow reasons | Rushed review due to large chart volume |
| 10 | Protocol window compliance | Procedures completed within allowed timing | Scheduler reports not shared | Late discovery after manual chart navigation |
| 11 | Source completeness | ALCOA-style documentation quality and correction clarity | Fragmented uploads miss context | Physical charts separated across departments |
| 12 | Source-to-EDC comparison | High-risk fields, dates, outcomes, and status aligned | Slow navigation reduces depth of review | Distraction from easy but low-yield SDV |
| 13 | Open query aging | Responses timely and evidence-based | Site delays visible but cause unclear | Site explains verbally but backlog remains |
| 14 | Protocol deviations | Logged, assessed, reported, trended, and prevented | Unreported drift hidden in records | Site normalizes repeat misses during conversation |
| 15 | AE capture | Clinic notes, hospitalizations, calls, and meds reconciled | Scattered files conceal missed events | Volume of paper source slows reconciliation |
| 16 | SAE reporting | Awareness date, report date, narrative, and docs align | Email trails incomplete | Site may describe urgency better than records show |
| 17 | Concomitant medications | Source, AE logic, and EDC consistency | Medication lists unavailable in portal view | Manual review fatigue misses discrepancies |
| 18 | Lab review | Normal ranges, PI review, flags, and action documentation | Missing reference ranges | Unsorted paper lab packets hide follow-up gaps |
| 19 | Vendor-dependent procedures | Central labs, imaging, ECG, ePRO completion tracked | Portal fragmentation | Local printouts disconnected from sponsor systems |
| 20 | Investigational product receipt | Shipments, temperatures, and receipt records reconcile | No visual confirmation of storage context | Site workflow interruptions delay pharmacy access |
| 21 | IP accountability | Dispense, return, destruction, and balance exact | Scans may hide handwriting issues | On-site recount may expose bigger mismatch than logs suggest |
| 22 | Temperature monitoring | Continuous records, excursions, and disposition traceability | Large logs not uploaded fully | Site may keep side logs outside official files |
| 23 | Regulatory binder health | Current CVs, licenses, lab certs, correspondence, amendments | Folder sprawl obscures completeness | Binder appearance can falsely reassure |
| 24 | PI oversight | Medical review, sign-off, and involvement visible in records | Harder to assess actual oversight culture | Charm of site leadership can mask documentation weakness |
| 25 | Site workflow maturity | Scheduling, escalation, filing, query response, and quality controls | Process weakness easier to miss remotely | Monitor may observe only staged readiness |
| 26 | Corrective action follow-up | Prior CAPA changed behavior, not just paperwork | Need evidence beyond screenshots | Staff assurances feel convincing without proof |
| 27 | Document request discipline | Precise list sent early with deadlines | Late uploads destroy review efficiency | Too much real-time pulling wastes visit hours |
| 28 | Communication quality | Questions framed clearly and findings discussed directly | Email ambiguity slows resolution | Informal conversation can blur what is official |
| 29 | Monitoring notes | Real-time issue tracker with evidence links | Screen fatigue reduces note quality | Fast site pace causes note gaps |
| 30 | Follow-up letter accuracy | Findings specific, risk-based, and action-oriented | Too generic after document-heavy review | Too delayed after travel and multiple visits |

2. How to Prepare for a Monitoring Visit So You Arrive Already Thinking Like a Risk Manager

Preparation is where most monitoring visits are won or lost. A CRA who starts real analysis after the visit begins is already behind. Before a remote review or an on-site visit, examine the study status with the same seriousness you would apply to an inspection readiness review or a high-risk site qualification assessment. Look at open queries, data-entry delays, prior monitoring letters, protocol amendments, subject disposition, staffing changes, deviation trends, serious and non-serious safety events, IP issues, and any site communication that suggests rising operational strain.
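Some of this pre-visit review reduces to simple arithmetic, for example query aging. The sketch below is illustrative only: it assumes a hypothetical EDC export with a query ID, subject ID, open date, and status for each query, plus an example 30-day threshold. Real export formats vary by vendor, and your monitoring plan sets the actual threshold.

```python
from datetime import date

# Hypothetical export rows: (query_id, subject_id, opened_on, status).
# Field layout and the 30-day default threshold are illustrative assumptions.
def aged_open_queries(queries, as_of, threshold_days=30):
    """Return (query_id, subject_id, age_in_days) for open queries older than the threshold."""
    aged = []
    for query_id, subject_id, opened_on, status in queries:
        if status != "open":
            continue  # closed queries do not count toward the backlog
        age = (as_of - opened_on).days
        if age > threshold_days:
            aged.append((query_id, subject_id, age))
    # Oldest first, so the most stale items lead the visit plan.
    return sorted(aged, key=lambda row: row[2], reverse=True)

queries = [
    ("Q-101", "S-001", date(2024, 1, 5), "open"),
    ("Q-102", "S-002", date(2024, 2, 20), "open"),
    ("Q-103", "S-001", date(2023, 12, 1), "closed"),
    ("Q-104", "S-003", date(2023, 12, 15), "open"),
]
print(aged_open_queries(queries, as_of=date(2024, 3, 1)))
```

Sorting by age is a deliberate choice. The aim is to see which subjects carry stale unresolved data before the visit begins, not just how many queries are open.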

The first job is setting visit objectives. A monitoring visit without clear objectives turns into a document harvest. Decide which subjects require deeper review and why. Newly enrolled participants, subjects with SAEs, participants with repeated out-of-window visits, screen failures with eligibility ambiguity, discontinued subjects, subjects affected by amendments, and any case involving IP discrepancies should rise to the top. This is where strong CRAs draw on their knowledge of adverse event reporting, protocol deviation handling, and informed consent, along with the operational awareness the CRA role demands.
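One way to make this prioritization explicit is a simple weighted flag score. This is a minimal sketch, not a validated method. The flag names mirror the categories listed above, but the weights in the hypothetical `RISK_WEIGHTS` table are invented for illustration. A real study would take its risk criteria from the monitoring plan.

```python
# Hypothetical weights; a real study would derive these from its monitoring plan.
RISK_WEIGHTS = {
    "newly_enrolled": 2,
    "sae": 5,
    "repeated_out_of_window": 3,
    "eligibility_ambiguity": 4,
    "discontinued": 2,
    "amendment_affected": 2,
    "ip_discrepancy": 5,
}

def prioritize_subjects(subject_flags):
    """Rank subjects by the summed weight of their risk flags, highest first."""
    scored = []
    for subject_id, flags in subject_flags.items():
        # Unrecognized flags contribute nothing to the score.
        score = sum(RISK_WEIGHTS.get(flag, 0) for flag in flags)
        scored.append((subject_id, score))
    # Ties are broken by subject ID so the ranking is reproducible.
    return sorted(scored, key=lambda item: (-item[1], item[0]))

subjects = {
    "S-001": {"newly_enrolled"},
    "S-002": {"sae", "ip_discrepancy"},
    "S-003": {"repeated_out_of_window", "amendment_affected"},
    "S-004": set(),
}
print(prioritize_subjects(subjects))
```

The score only orders the review. It does not replace judgment about a single high-impact issue, such as a possible ineligible enrollment, that should move a subject to the top regardless of its total.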

For remote visits, preparation must be even tighter. Send a focused request list early. Specify which source records, logs, consent packets, lab documentation, accountability records, training files, and correspondence you need. Ask for them in an order that supports efficient review instead of forcing the site to guess. Poor remote monitoring often fails before it starts because the CRA requests too much, too vaguely, too late. Then the site uploads partial evidence, key timestamps are missing, and the monitor wastes hours reconstructing context. A strong remote CRA uses document requests like a scalpel.

For on-site visits, the preparation burden shifts slightly. You may have broader access to documents during the visit, but that does not justify vague planning. Define what you need from pharmacy, regulatory, and clinical staff. Identify the specific accountability periods to reconcile, the subject charts to review, and the unresolved items from prior correspondence. This reduces one of the most common on-site failures: spending half the day waiting for binders, signatures, or access while high-risk review time disappears.

Preparation also means anticipating where explanations may be weak. If the site has had delayed queries and recent staff turnover, you should expect documentation inconsistency. If the site implemented a protocol amendment recently, you should expect risk around reconsent, retraining, updated procedures, and version control. If the site enrolled rapidly after being slow, you should look for pressure cracks in consent workflow, eligibility confirmation, and documentation lag. This is the difference between passive review and true monitoring judgment.

Finally, prepare your note structure before the visit. Organize by subject, issue category, and follow-up item. The CRA who improvises note architecture mid-review tends to miss connections and write weaker letters later. Strong note discipline leads to stronger findings, cleaner follow-up, and more defensible sponsor communication.

3. Mastering the Actual Visit: How CRAs Should Review, Question, and Document in Real Time

During the visit, the CRA’s job is not to move linearly through documents. It is to test whether the site’s story holds together. A subject was eligible. A visit occurred on time. A lab was reviewed. An AE was captured. An IP dose was dispensed and returned accurately. A protocol amendment was implemented correctly. These are not claims to accept. They are claims to verify through linked evidence. The strongest monitors move with discipline between source, logs, EDC, accountability records, training documents, and communication trails, drawing on sound monitoring technique, documentation best practices, GCP, and a clear understanding of sponsor responsibilities.
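Verifying that a visit occurred on time is a date calculation against the protocol schedule. The sketch below makes several assumptions that are not taken from any real protocol. The `SCHEDULE` table, its target days, and its ± windows are hypothetical. Study day 0 is taken as the baseline date. Protocols define day numbering and window rules in different ways.

```python
from datetime import date, timedelta

# Hypothetical schedule: visit name -> (target study day, allowed +/- days).
# Study day 0 is assumed to be the baseline/randomization date.
SCHEDULE = {"Visit 2": (14, 3), "Visit 3": (28, 3), "Visit 4": (56, 7)}

def window_deviations(baseline, actual_visits):
    """Return (visit, actual date, earliest allowed, latest allowed) for out-of-window visits."""
    out_of_window = []
    for visit, actual in actual_visits.items():
        target_day, tolerance = SCHEDULE[visit]
        target = baseline + timedelta(days=target_day)
        earliest = target - timedelta(days=tolerance)
        latest = target + timedelta(days=tolerance)
        if not (earliest <= actual <= latest):
            out_of_window.append((visit, actual, earliest, latest))
    return out_of_window

baseline = date(2024, 3, 1)
visits = {
    "Visit 2": date(2024, 3, 16),
    "Visit 3": date(2024, 4, 3),
    "Visit 4": date(2024, 4, 26),
}
for row in window_deviations(baseline, visits):
    print(row)
```

Keeping the allowed range in the output lets the finding state the gap precisely ("Visit 3 occurred 2 days after the window closed") rather than just "out of window."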

When reviewing consent, do more than confirm signatures. Check version control against IRB approval dates. Verify consent happened before study procedures. Look for source support showing the conversation occurred appropriately. Review reconsent after amendments. Many sites can produce signed forms. Fewer can prove a compliant consent process. Great CRAs understand the distinction and evaluate it using the logic behind informed consent best practices, IRB responsibilities, and broader research ethics and compliance.
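The version and timing parts of the consent review can be expressed as two checks: the signed version must match the IRB-approved version in effect on the consent date, and consent must not postdate the first study procedure. This is a minimal sketch. The `APPROVED_VERSIONS` table and the function names are hypothetical. The checks do not assess the quality of the consent conversation, which still requires source review.

```python
from datetime import date

# Hypothetical IRB-approved consent versions with effective periods.
# An end date of None means the version is still current.
APPROVED_VERSIONS = [
    ("v1.0", date(2023, 6, 1), date(2024, 1, 14)),
    ("v2.0", date(2024, 1, 15), None),
]

def version_in_effect(on_date):
    """Return the consent version approved for use on on_date, or None."""
    for version, start, end in APPROVED_VERSIONS:
        if start <= on_date and (end is None or on_date <= end):
            return version
    return None

def consent_findings(consent_date, version_signed, first_procedure_date):
    """List consent issues for one subject; an empty list means none were found."""
    findings = []
    expected = version_in_effect(consent_date)
    if expected is None:
        findings.append("no IRB-approved version was in effect on the consent date")
    elif version_signed != expected:
        findings.append(f"signed {version_signed}, but {expected} was in effect")
    # Same-day consent passes this date-level check; the order within the day
    # still has to be confirmed from time-stamped source.
    if consent_date > first_procedure_date:
        findings.append("study procedures started before consent")
    return findings

print(consent_findings(date(2024, 2, 1), "v1.0", date(2024, 1, 30)))
```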

Eligibility review should be criterion based, not impression based. Walk through each inclusion and exclusion criterion and confirm source support existed before enrollment or randomization. When evidence is partial, vague, or retrospective, do not let convenience turn that into acceptance. Ineligible subjects create downstream damage to safety interpretation and dataset credibility. This is where strong CRA discipline intersects with protocol interpretation, endpoint understanding, and clean case report form practice.

Safety review is where many visits reveal hidden site weakness. Compare clinic notes, hospitalization records, concomitant medications, subject calls, and lab abnormalities against AE and SAE records. Look for events that were recorded clinically but never entered as AEs, delayed reporting timelines, incomplete follow-up, and PI oversight gaps. Sites often think they have a safety problem only when an SAE is missed. In reality, repeated weak AE reconciliation may be the bigger signal. Strong CRAs bring together AE reporting, SAE procedures, drug safety reporting requirements, and pharmacovigilance basics into one coherent review.
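Two parts of this reconciliation can be sketched mechanically. The first finds hospitalizations in source that have no matching AE record. The second flags SAEs reported later than a required interval after site awareness. Everything here is an assumption for illustration. The record fields are hypothetical. Matching on subject and exact date is deliberately simplistic. The 24-hour default is only a commonly used example, because the protocol and safety plan define the real timeline.

```python
from datetime import date, datetime, timedelta

def unreconciled_hospitalizations(hospitalizations, ae_records):
    """Return hospitalizations with no AE record for the same subject and onset date."""
    logged = {(ae["subject"], ae["onset"]) for ae in ae_records}
    return [h for h in hospitalizations if (h["subject"], h["admitted"]) not in logged]

def late_sae_reports(saes, limit=timedelta(hours=24)):
    """Return IDs of SAEs reported to the sponsor more than `limit` after site awareness."""
    return [s["id"] for s in saes if s["reported"] - s["aware"] > limit]

hospitalizations = [
    {"subject": "S-001", "admitted": date(2024, 3, 10)},
    {"subject": "S-002", "admitted": date(2024, 3, 12)},
]
ae_records = [{"subject": "S-001", "onset": date(2024, 3, 10)}]
saes = [
    {"id": "SAE-1", "aware": datetime(2024, 3, 10, 9, 0), "reported": datetime(2024, 3, 10, 17, 0)},
    {"id": "SAE-2", "aware": datetime(2024, 3, 12, 8, 0), "reported": datetime(2024, 3, 14, 8, 0)},
]
print(unreconciled_hospitalizations(hospitalizations, ae_records))
print(late_sae_reports(saes))
```

In practice, an unmatched hospitalization is a lead to investigate, not a finding on its own. The onset date recorded for the AE may legitimately differ from the admission date.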

For IP review, count as if the numbers matter, because they do. Reconcile shipments, receipt logs, dispense records, returns, destruction, and current balance. Review temperature logs and excursion handling. If the numbers are off, resist the urge to let the site “double-check later” before you fully document the discrepancy. A mismatch can be a clerical issue. It can also be a serious control failure. The monitor’s job is to see the difference through evidence.
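The core of the reconciliation is a balance equation compared against a physical count. This is a minimal sketch under one stated convention: units returned by subjects count as back in site custody until they are destroyed or shipped back. Some studies track returns separately from dispensable stock, so the formula may need adjusting. `AccountabilityPeriod` is a hypothetical structure.

```python
from dataclasses import dataclass

@dataclass
class AccountabilityPeriod:
    """Unit counts for one reconciliation period (hypothetical structure)."""
    opening_balance: int
    received: int
    dispensed: int
    returned: int        # returned by subjects; assumed back in site custody
    destroyed: int       # destroyed or shipped back; leaves site custody
    physical_count: int  # what the monitor actually counted

    def expected_on_hand(self) -> int:
        # opening + received - dispensed + returned - destroyed
        return (self.opening_balance + self.received - self.dispensed
                + self.returned - self.destroyed)

    def discrepancy(self) -> int:
        # Negative: fewer units on hand than the logs explain; positive: more.
        return self.physical_count - self.expected_on_hand()

period = AccountabilityPeriod(opening_balance=40, received=60, dispensed=35,
                              returned=12, destroyed=10, physical_count=65)
print(period.expected_on_hand(), period.discrepancy())
```

A nonzero discrepancy is the point at which the CRA documents the gap, before any recount, as the paragraph above advises.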

Throughout the visit, ask direct questions with precise framing. “Help me understand how this missed visit window was identified and handled.” “What control ensures outdated consent versions cannot be used?” “Who reconciles AE source against hospitalization records?” “When staff changed, how was delegation and training updated before procedures continued?” Questions like these expose systems. Weak questions invite vague reassurance.

And document in real time. Do not trust memory to preserve nuance after five charts, two pharmacy discussions, and a closing meeting. Good notes capture the fact pattern, the associated risk, what evidence was reviewed, what remains pending, and what the site said. That precision becomes your protection later.


4. Remote Monitoring vs On-Site Monitoring: What Changes, What Stays the Same, and What Each Mode Hides

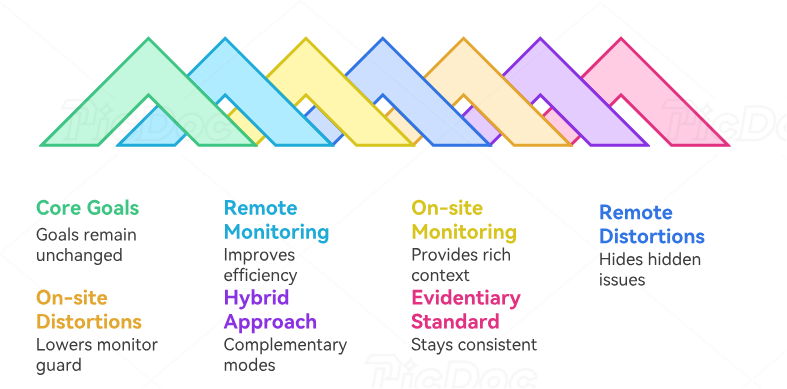

The core goals of monitoring do not change when the visit format changes. The CRA still must verify participant protection, protocol compliance, data integrity, IP control, and site process quality. What changes is the type of visibility available and the kind of discipline required. Remote monitoring often improves efficiency for targeted document review, query follow-up, and interim oversight. On-site monitoring provides richer context, direct access to staff, and stronger evaluation of how the site actually functions. Mature CRAs know where each mode is strong and where each one can mislead them.

Remote monitoring can create the illusion of clarity because documents arrive in neat digital folders. That organization may be real, or it may simply be curated. Missing uploads, fragmented source, absent context, scan-quality issues, and selective document availability can hide serious problems. A remote CRA must therefore be more demanding about chronology, completeness, and supporting records. Request the surrounding note, not just the single page. Request the reference ranges, not just the lab value. Request the accountability period, not just the summary line. This approach reflects the rigor expected in clinical data management and EDC review, and it depends on knowing the remote tools well.

On-site monitoring brings its own distortions. Being physically present can make a site feel more transparent than it really is. Friendly staff, quickly produced binders, and an organized conference room can lower a monitor’s guard. Yet many deeper issues only appear when you compare records carefully: recurring missed procedures, weak PI oversight evidence, outdated delegation, incomplete follow-up on abnormal labs, or repeated deviation patterns buried across subjects. On-site presence should increase scrutiny, not soften it.

Remote visits are usually better for focused, preplanned review with strong digital systems. They work well when the site uploads quality records on time, when access controls are stable, and when the CRA defines scope clearly. On-site visits are usually stronger for IP accountability, pharmacy review, process assessment, training verification, staff interaction, and any situation where site dysfunction is suspected but not yet well documented. They are also valuable when recent protocol amendments, staffing instability, or serious findings suggest broader process breakdown.

The smartest CRAs treat remote and on-site work as complementary rather than hierarchical. Remote review can surface trends, prioritize subjects, close routine items, and prepare the monitor to use on-site time where it matters most. On-site work can then validate whether the apparent story from remote materials matches actual site conduct. This hybrid mindset reflects the broader evolution of clinical trial technology, CTMS and EDC infrastructure, and risk-based oversight in modern studies.

What should stay the same across both modes is your evidentiary standard. Do not lower it because the review is remote. Do not lower it because the site is cooperative on-site. Rights, safety, data, and compliance still need proof. Great monitors keep that standard stable even when the visit format changes.

5. Turning Monitoring Findings Into Better Sites Instead of Repeat Problems

A monitoring visit is only as good as its follow-up. CRAs who identify issues but write vague letters or accept cosmetic responses create repeat findings, sponsor frustration, and sites that learn how to survive visits without actually improving. Strong follow-up begins with findings that are specific, evidence based, and operationally meaningful. “Documentation incomplete” is weak. “Visit 5 source lacked contemporaneous documentation of unscheduled AE follow-up and did not support the date entered in EDC” is useful. Specificity gives the site something real to fix.

Each finding should show why it matters. Did it affect participant safety, informed consent validity, eligibility confirmation, timing of required assessments, accuracy of safety reporting, or data credibility? This matters because sites prioritize what they understand. If the CRA explains only the surface defect, the site often responds with surface correction. When the underlying risk is clear, the corrective action is more likely to reach the workflow level. This is the same logic behind strong audit and inspection readiness, quality-focused protocol deviation management, and effective stakeholder communication.

CRAs should also learn to recognize weak corrective actions. “Staff retrained” is not a serious answer unless it is tied to a defined process change, ownership, timeframe, and verification step. If the site used the wrong consent version, how is version control now being managed? If visit windows were missed repeatedly, what scheduling trigger or pre-visit review now prevents that? If AE reporting was late, what reconciliation checkpoint has been added? Strong monitors ask whether the corrective action makes recurrence harder. If it does not, the CAPA is probably shallow.
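A first screen for shallow CAPAs checks whether the four elements named above are present at all: a process change, an owner, a timeframe, and a verification step. This sketch assumes a hypothetical dictionary representation of a CAPA. It only detects missing elements. Whether the change actually makes recurrence harder remains a judgment call.

```python
# The four elements named in the text; the dictionary keys are hypothetical.
REQUIRED_ELEMENTS = ("process_change", "owner", "due_date", "verification_step")

def missing_capa_elements(capa):
    """Return the required CAPA elements that are absent or blank."""
    missing = []
    for element in REQUIRED_ELEMENTS:
        value = capa.get(element)
        # Treat absent keys, None, and whitespace-only strings as missing.
        if value is None or not str(value).strip():
            missing.append(element)
    return missing

capa = {
    "description": "Staff retrained on consent",
    "owner": "Study coordinator",
    "process_change": "",
}
print(missing_capa_elements(capa))
```

A "staff retrained" response like the one above fails on three of the four elements. That gives the CRA a concrete basis for asking for more.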

Another advanced monitoring skill is trend memory. A single issue may look minor in one visit and serious across three. Keep a running understanding of site patterns: repeat timing failures, recurring delegation sloppiness, persistent query backlog, PI oversight documentation gaps, repeated IP reconciliation issues, or amendment implementation weakness. Sites often experience their problems as isolated episodes. The CRA is the person positioned to see the pattern. That pattern view is one of the most valuable contributions a monitor can make.
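Trend memory can be supported by a simple tally of finding categories across visits. This is a minimal sketch. The visit labels and category names are hypothetical, and the two-visit recurrence threshold is an illustrative choice rather than a standard. Each category is counted once per visit, so several instances of the same issue in one busy visit do not look like a trend across visits.

```python
from collections import Counter

def recurring_categories(visits, min_visits=2):
    """Return finding categories seen in at least min_visits separate visits."""
    counts = Counter()
    for findings in visits.values():
        # set() counts each category once per visit.
        counts.update(set(findings))
    return sorted(cat for cat, n in counts.items() if n >= min_visits)

# Hypothetical findings by monitoring visit.
visits = {
    "Monitoring visit 1": ["visit window", "query backlog"],
    "Monitoring visit 2": ["delegation log", "visit window", "visit window"],
    "Monitoring visit 3": ["visit window", "IP reconciliation", "query backlog"],
}
print(recurring_categories(visits))
```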

Closing meetings matter too. Use them to make priorities unmistakable. Do not flood the site with ten equal-sounding concerns when three are clearly more important. Distinguish critical from annoying. Explain what needs immediate action, what requires proof, and what you will verify at the next review. Strong closing communication prevents the classic site complaint that the letter “made everything sound equally urgent.”

Finally, follow-up should be timely. A beautifully reasoned letter sent too late loses force. Delay weakens detail, blurs urgency, and trains sites to treat monitoring follow-up as background noise. Great CRAs respect the visit enough to close the loop while the evidence is still fresh and the site’s attention is still focused.

6. FAQs About Mastering Remote and On-Site Monitoring Visits as a CRA

- What is the biggest difference between a weak and a strong CRA monitor?

The biggest difference is not technical knowledge alone. It is risk judgment. A weak monitor reviews what is easy to review and reports what is easy to describe. A strong monitor identifies what matters most, verifies it through linked evidence, and explains findings in a way that forces meaningful correction. That skill grows from mastering monitoring technique, GCP, and the full scope of CRA responsibilities.

- Is remote monitoring as effective as on-site monitoring?

Remote monitoring can be highly effective for focused review, data follow-up, trend analysis, and targeted document verification when the scope is clear and the site provides strong digital access. On-site monitoring is stronger for process observation, IP review, broader document access, and assessing how the site really functions under operational pressure. Effectiveness depends less on format and more on planning, evidence quality, and CRA discipline.

- How should a CRA decide what to review first during a monitoring visit?

Start with risk. Review unresolved action items, subjects with safety events, recent enrollments, protocol deviations, delayed data entry, amendments, staffing changes, and any area where prior visits showed weakness. Let risk determine sequence. This approach is far stronger than beginning with whatever record the site hands you first.

- How can a CRA improve remote monitoring visits?

Improve remote monitoring by sending precise document requests early, narrowing review scope to risk-based priorities, demanding full context around key records, tracking missing evidence aggressively, and taking structured notes during review. Remote visits fail when the CRA allows fragmented uploads and vague follow-up to become the working norm.

- Which monitoring findings deserve rapid escalation?

Issues involving informed consent failures, unsupported eligibility, delayed SAE reporting, significant protocol deviations, major IP accountability discrepancies, participant safety concerns, and repeated evidence of weak PI oversight deserve rapid escalation. These are the findings most likely to damage patient protection, data credibility, or sponsor trust.

- How can CRAs turn monitoring findings into lasting site improvement?

Write findings specifically, explain the risk behind them, reject cosmetic CAPAs, track repeat patterns over time, and verify that the site changed workflow rather than just updated paperwork. Monitoring creates improvement only when the feedback is sharp enough to reshape behavior.