Clinical Trial Data Review & Verification: Key CRA Skills

Clinical trial data review is where CRA judgment becomes visible. Anyone can compare a field against a source note; skilled monitors know which inconsistencies threaten eligibility, endpoints, safety reporting, inspection readiness, and sponsor trust. A strong CRA combines core CRA responsibilities, GCP compliance, case report form accuracy, and documentation control into one disciplined review process that catches small data problems before they become regulatory findings.

1. Why Clinical Trial Data Review Requires More Than Field Checking

A CRA reviewing data is making a risk judgment every time they look at a source note, lab result, consent form, eCRF field, deviation log, adverse event entry, or monitoring follow-up item. The goal is to prove that the trial story is traceable from patient visit to source record to CRF documentation, from source record to clinical trial protocol requirements, and from protocol requirements to GCP inspection expectations.

The dangerous CRA habit is treating every field with equal intensity. High-value review starts with critical data: eligibility, primary endpoint variables, safety events, dosing, informed consent, visit windows, prohibited medications, and any data used for statistical analysis. Those areas connect directly to primary and secondary endpoints, serious adverse event reporting, informed consent procedures, and protocol deviation management.

Data review also separates a passive monitor from a decision-ready CRA. A passive monitor says “query opened.” A decision-ready CRA explains the chain of consequences: the site entered the wrong visit date; that date changes the visit window calculation; the out-of-window visit affects endpoint validity; the pattern suggests coordinator retraining; and the monitoring report needs a corrective action tied to CRC protocol management, CRC documentation practices, and GCP compliance strategies.

| Data Area | What the CRA Verifies | Risk Signal | Strong CRA Action |
|---|---|---|---|
| Informed consent | Correct version, signatures, dates, timing before procedures, re-consent needs | Procedure performed before consent or wrong form version | Escalate immediately and align with informed consent essentials |
| Eligibility criteria | Inclusion and exclusion criteria against source, labs, history, medications | Eligibility supported by assumptions instead of documented evidence | Request source clarification and assess impact on protocol compliance |
| Randomization | Subject randomized only after eligibility completion and required approvals | Randomization before final lab, washout, consent, or physician review | Verify audit trail and compare with randomization techniques |
| Blinding | Unblinded access, accidental disclosure, drug accountability segregation | Source notes reveal assignment or unblinded staff document clinical judgments | Escalate to study team using blinding principles |
| Visit windows | Visit dates, allowed ranges, missed assessments, rescheduling documentation | Dates entered correctly but protocol window calculations ignored | Open targeted query and assess amendment impact |
| Primary endpoint data | Endpoint assessment timing, qualified assessor, source support, unit consistency | Endpoint value present in EDC with weak source trail | Prioritize review because endpoint errors affect trial conclusions |
| Secondary endpoints | Assessment completeness, scale scoring, device timestamps, repeat assessments | Partial completion treated as evaluable data | Confirm scoring rules and document issue in monitoring follow-up |
| Adverse events | Onset date, severity, seriousness, causality, outcome, action taken | Symptoms in notes missing from AE log | Reconcile against AE identification and reporting |
| Serious adverse events | SAE criteria, sponsor notification, IRB reporting, follow-up documentation | Hospitalization mentioned without SAE assessment | Escalate urgently using SAE reporting procedures |
| Concomitant medications | Start/stop dates, indication, dose, prohibited medication status | Medication explains AE or exclusion issue but stays unreconciled | Compare against protocol restrictions and AE narrative |
| Medical history | Baseline conditions, ongoing status, relationship to eligibility and AEs | Baseline disease later reported as new AE | Clarify onset and status with site source documentation |
| Laboratory data | Collection date, fasting status, units, normal ranges, clinical significance | Abnormal result lacks investigator assessment | Require PI review and align with patient safety oversight |
| Vitals | Timing, position, repeat measures, outlier explanation, device consistency | Outlier removed or averaged without source justification | Ask site to document source rationale and EDC correction trail |
| ECG or imaging | Timing, reader, report availability, abnormal finding follow-up | Report exists but clinical significance field is blank | Confirm investigator assessment and endpoint relevance |
| Study drug dosing | Dose, date, time, interruptions, missed doses, compliance calculations | Dosing gap hidden inside accountability totals | Reconcile dosing source, diary, accountability, and AE data |
| Drug accountability | Dispensed, returned, destroyed, lot numbers, temperature excursions | Returned count conflicts with dosing record | Trace accountability against visit record and pharmacy logs |
| Protocol deviations | Deviation date, description, root cause, corrective action, preventability | Issue corrected in EDC but missing from deviation log | Connect finding to deviation compliance strategy |
| Source corrections | Dated, initialed, legible, reason where needed, original entry visible | Overwritten source or late reconstruction | Assess ALCOA-C risk and retrain site staff |
| eCRF changes | Audit trail, reason for change, query history, data ownership | Repeated changes after sponsor prompts without source update | Compare EDC trail with source and monitoring notes |
| Queries | Open aging, repetitive issues, vague answers, inconsistent closures | Site closes queries with “confirmed” and no source support | Write evidence-based re-query and document trend |
| Subject diaries | Completion dates, missing entries, transcription accuracy, device sync | Diary data manually entered without discrepancy explanation | Verify against source and ePRO expectations |
| Patient-reported outcomes | Timing, completion by subject, language/version, missing items | Staff-completed response or assessment outside window | Determine evaluability and escalate endpoint risk |
| Device data | Calibration, timestamps, uploads, missing files, subject assignment | Device ID mismatch or upload gap | Document issue and request vendor/site reconciliation |
| Lab kit tracking | Collection time, shipment, temperature, accession numbers, missing samples | Sample collected but absent from central lab portal | Reconcile site log, courier record, and lab database |
| Investigator oversight | PI review, delegation, safety sign-off, protocol decisions | Coordinator decisions appear without PI confirmation | Assess against PI responsibilities |
| Delegation log | Task authorization before work performed, training completion, role fit | Staff entered endpoint or consent data before delegation | Open finding tied to GCP training requirements |
| Regulatory binder | IRB approvals, CVs, licenses, training, protocol versions, correspondence | Data generated under expired approval or missing documentation | Connect to regulatory document management |
| Monitoring follow-up | Issue ownership, due dates, repeat findings, evidence of closure | Same issue appears across visits with weak CAPA | Escalate trend through sponsor communication and site management |
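
Some rows in the table reduce to a simple timestamp comparison. As a minimal sketch of the informed consent row's risk signal (a procedure performed before consent), the Python example below lists any procedures timestamped earlier than the consent signature. The function name, procedure names, and timestamps are hypothetical and chosen only for illustration; a real check would also need the consent form version and re-consent history.

```python
from datetime import datetime


def procedures_before_consent(consent_signed, procedures):
    """Return names of procedures performed before the consent timestamp.

    `procedures` is a list of (name, performed_at) tuples (hypothetical layout).
    """
    return [name for name, performed_at in procedures if performed_at < consent_signed]


# Illustrative data only
consent_signed = datetime(2024, 3, 4, 9, 30)
procedures = [
    ("screening_ecg", datetime(2024, 3, 4, 9, 10)),
    ("blood_draw", datetime(2024, 3, 4, 10, 0)),
]

early = procedures_before_consent(consent_signed, procedures)
print(early)  # ['screening_ecg']
```

Any non-empty result would be a candidate for immediate escalation rather than a routine query.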

2. The CRA Workflow for Data Review, SDV, SDR, and Risk-Based Verification

A strong CRA starts before the visit by reviewing the monitoring plan, protocol, prior monitoring letter, data listings, open queries, deviation trends, missing pages, and safety reports. That preparation connects site monitoring techniques, investigator site management, clinical trial auditing readiness, and sponsor role expectations into a visit plan.

Source data verification (SDV) confirms that eCRF data matches source. Source data review (SDR) asks whether the source itself is adequate: attributable, legible, contemporaneous, original, accurate, complete, consistent, enduring, and available. The CRA who understands this difference catches the hidden risks: copied-forward visit notes, missing investigator assessment, reconstructed eligibility support, endpoint source gaps, and weak correction practices. This is where GCP guidelines, clinical trial documentation, CRF best practices, and CRC responsibilities must work together.
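
The verification half of that distinction is mechanical enough to sketch in code. The minimal Python example below compares eCRF values against transcribed source values field by field. The `sdv_mismatches` helper and all field names are hypothetical. Source data review, by contrast, is a judgment about whether the source itself can be trusted, and a comparison like this cannot replace it.

```python
_MISSING = object()  # sentinel so an absent field never equals a real value


def sdv_mismatches(ecrf: dict, source: dict) -> list:
    """Return the sorted names of fields whose eCRF value differs from source.

    A field present on only one side is reported as a mismatch.
    """
    all_fields = sorted(set(ecrf) | set(source))
    return [
        name
        for name in all_fields
        if ecrf.get(name, _MISSING) != source.get(name, _MISSING)
    ]


# Illustrative data only
ecrf = {"visit_date": "2024-03-05", "weight_kg": 71.5, "systolic_bp": 128}
source = {"visit_date": "2024-03-04", "weight_kg": 71.5, "systolic_bp": 128}

print(sdv_mismatches(ecrf, source))  # ['visit_date']
```

A clean result here only shows that the transcription matches. It says nothing about whether the source entry was contemporaneous, attributable, or reconstructed later.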

The best workflow is simple: identify critical data, verify high-risk fields first, compare across sources, document discrepancies cleanly, query only what needs formal correction, escalate what affects subject safety or data integrity, and confirm that corrective action prevents recurrence. A CRA should never spend the best hours of a visit chasing low-value cosmetic fields while eligibility errors, AE reconciliation gaps, and dosing inconsistencies sit untouched. The priority lens should come from risk management in clinical trials, data monitoring committee responsibilities, clinical data management systems, and EDC system review.
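
The "critical data first" step of this workflow can be sketched as a simple ordering rule. The Python example below moves items in critical areas to the front of a review list while preserving the original order within each group. The area labels mirror the critical data named earlier in this article; the `review_order` helper and the sample field names are hypothetical, and a real study would take its critical data list from the monitoring plan.

```python
# Critical areas as described in this article; a real list comes from the monitoring plan.
CRITICAL_AREAS = {
    "informed_consent",
    "eligibility",
    "primary_endpoint",
    "safety_event",
    "dosing",
    "visit_window",
    "prohibited_medication",
}


def review_order(items):
    """Return items with critical areas first.

    `items` is a list of (area, field) tuples. Python's sort is stable,
    so the original order is kept within the critical and non-critical groups.
    """
    return sorted(items, key=lambda item: item[0] not in CRITICAL_AREAS)


# Illustrative data only
items = [
    ("demographics", "middle_initial"),
    ("eligibility", "exclusion_4_lab"),
    ("vitals", "height_cm"),
    ("safety_event", "ae_onset_date"),
]

for area, field in review_order(items):
    print(area, field)
```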

A high-performing CRA also separates data discrepancy from process weakness. One wrong date may require a query. Ten wrong dates across three subjects require retraining, root-cause analysis, and follow-up in the monitoring report. One missed AE entry may be a site oversight. Several symptom notes missing from the AE log may show that the site does not understand adverse event handling, drug safety reporting timelines, pharmacovigilance basics, and medical monitor review expectations.
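
The difference between an isolated discrepancy and a process trend can be illustrated with a small counting sketch. The Python example below groups findings by issue type and flags any type that recurs across several subjects. The thresholds (`min_count`, `min_subjects`), the issue labels, and the subject IDs are all hypothetical; what counts as a trend in practice is a judgment guided by the monitoring plan.

```python
from collections import defaultdict


def process_trends(findings, min_count=3, min_subjects=2):
    """Flag issue types that recur often enough to suggest a process weakness.

    `findings` is a list of (subject_id, issue_type) tuples.
    Thresholds are illustrative assumptions, not regulatory values.
    """
    subjects_by_issue = defaultdict(list)
    for subject_id, issue_type in findings:
        subjects_by_issue[issue_type].append(subject_id)

    trends = {}
    for issue_type, subjects in subjects_by_issue.items():
        distinct = sorted(set(subjects))
        if len(subjects) >= min_count and len(distinct) >= min_subjects:
            trends[issue_type] = {"count": len(subjects), "subjects": distinct}
    return trends


# Illustrative data only
findings = [
    ("101", "wrong_visit_date"),
    ("101", "wrong_visit_date"),
    ("102", "wrong_visit_date"),
    ("103", "wrong_visit_date"),
    ("102", "missing_ae_entry"),
]

print(process_trends(findings))
# {'wrong_visit_date': {'count': 4, 'subjects': ['101', '102', '103']}}
```

In this sample the single missed AE entry stays a query-level item, while the repeated date errors surface as a candidate for retraining and root-cause analysis.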

3. The Highest-Risk Data Areas CRAs Must Review With Extra Discipline

Eligibility is the first pressure point because enrolling the wrong subject can compromise safety, analysis populations, and regulatory credibility. The CRA should verify every inclusion and exclusion criterion using direct source evidence, including labs, imaging, disease history, washout periods, prohibited medications, prior therapies, pregnancy testing, consent timing, and investigator judgment. This review depends on protocol interpretation, phase-specific trial requirements, principal investigator oversight, and IRB responsibilities.

Safety data is the second pressure point because underreported symptoms can become inspection findings, delayed SAEs, incomplete narratives, and weak benefit-risk evaluation. CRAs should compare progress notes, phone calls, unscheduled visits, hospital records, lab abnormalities, medication changes, and study discontinuation reasons against the AE and SAE modules. The review should tie together AE management, SAE definitions, patient safety oversight, and clinical trial medical oversight.

Endpoint data is the third pressure point because a beautiful database with weak endpoint support gives the trial a false sense of cleanliness. The CRA must verify assessment timing, assessor qualification, instrument version, scale scoring, missing items, source location, and audit trail changes. For endpoint-heavy studies, the monitor should understand biostatistics in clinical trials, sample size logic, randomization and blinding tools, and placebo-controlled trial design.

Visit timing and dosing data deserve the same discipline because they quietly drive deviation patterns. A subject may have a correct eCRF date, a signed visit note, and a completed assessment, while the true issue sits in the protocol window calculation or missed pre-dose timing. CRAs should reconcile visit schedules with dosing logs, lab collection times, diary completion, treatment interruption, and accountability records. This requires practical command of protocol deviations, clinical trial amendments, study documentation skills, and site qualification expectations.
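
A visit window check is simple date arithmetic, which is why a correctly transcribed date can still hide a deviation. The Python sketch below assumes a hypothetical protocol in which a visit is targeted a fixed number of days after baseline with a symmetric allowance (for example, Day 28 ± 3). The function name, the baseline-as-Day-0 convention, and the dates are assumptions for illustration; actual protocols define their own day numbering and window rules.

```python
from datetime import date, timedelta


def visit_window_status(baseline, target_day, window_days, actual):
    """Classify a visit date as 'early', 'in_window', or 'late'.

    Assumes baseline is Day 0 and the window is symmetric around the target.
    """
    target = baseline + timedelta(days=target_day)
    earliest = target - timedelta(days=window_days)
    latest = target + timedelta(days=window_days)
    if actual < earliest:
        return "early"
    if actual > latest:
        return "late"
    return "in_window"


# Illustrative data only: baseline 10 Jan 2024, target Day 28 with a +/- 3 day window,
# so the allowed range is 4 Feb 2024 through 10 Feb 2024.
baseline = date(2024, 1, 10)

print(visit_window_status(baseline, 28, 3, date(2024, 2, 9)))   # in_window
print(visit_window_status(baseline, 28, 3, date(2024, 2, 12)))  # late
```

The second visit date could match source perfectly and pass SDV while still being a protocol deviation.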


4. Query Management, Escalation, and Documentation: Where CRA Skill Shows Up Fast

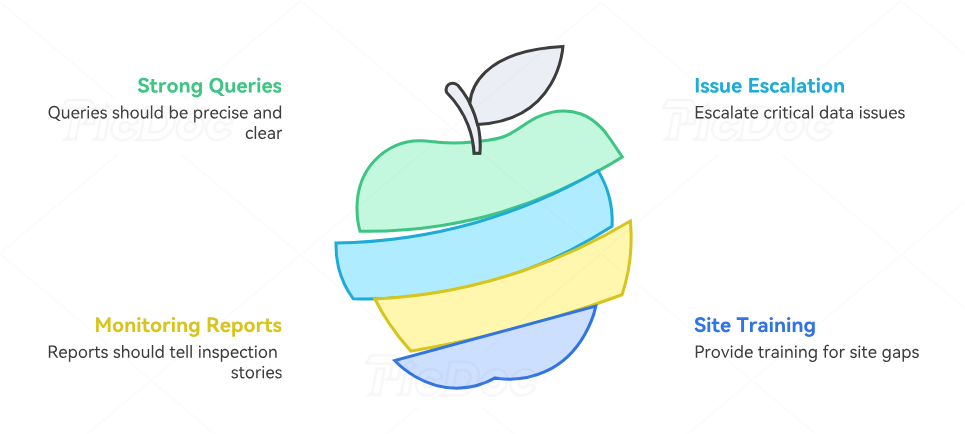

A query should be precise enough that the site knows what evidence is missing, why the field may be wrong, and which source record needs review. Weak queries create weak answers: “confirmed,” “updated,” “per source,” or “data correct.” Strong queries name the discrepancy, cite the relevant field, request the missing support, and avoid coaching the site toward a desired answer. That discipline supports effective data collection, clinical data manager priorities, EDC workflows, and clinical trial technology review.
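
The weak answers listed above are easy to screen for when reviewing a query listing. The Python sketch below flags responses that consist of nothing more than one of those stock phrases. The phrase list comes from this section; the `is_weak_response` helper and the sample responses are hypothetical, and a flagged response still needs a human decision on whether to re-query.

```python
# Stock phrases named in this section as weak query answers
WEAK_RESPONSES = {"confirmed", "updated", "per source", "data correct"}


def is_weak_response(response: str) -> bool:
    """Return True if the response is only a stock phrase with no supporting detail."""
    normalized = response.strip().strip(".!").lower()
    return normalized in WEAK_RESPONSES


# Illustrative data only
print(is_weak_response("Confirmed."))  # True
print(is_weak_response("Visit date corrected to 04-Mar-2024 per signed clinic note"))  # False
```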

The CRA should escalate when a data issue affects subject safety, eligibility, primary endpoint reliability, regulatory compliance, or repeated site performance. Escalation should describe the problem, impact, evidence, root cause if known, immediate containment, owner, due date, and prevention plan. This approach turns monitoring from a checklist activity into a trial protection function. It also connects with vendor management, stakeholder communication, resource allocation, and clinical trial project planning.
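
The escalation elements listed above can be treated as a structured record so that nothing is left out before it is sent. The Python sketch below models those elements as a dataclass and reports which required ones are blank. The class name, field names, and sample content are hypothetical; root cause is optional here because the text qualifies it with "if known".

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class Escalation:
    problem: str
    impact: str
    evidence: str
    containment: str
    owner: str
    due_date: Optional[date]
    prevention_plan: str
    root_cause: Optional[str] = None  # optional: "root cause if known"


def missing_fields(escalation: Escalation) -> list:
    """Return names of required fields that are None or blank."""
    missing = []
    for f in fields(escalation):
        if f.name == "root_cause":
            continue
        value = getattr(escalation, f.name)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(f.name)
    return missing


# Illustrative data only
draft = Escalation(
    problem="Hospitalization in phone note without SAE assessment",
    impact="Possible late SAE report",
    evidence="Phone note dated 2024-03-02",
    containment="",
    owner="",
    due_date=date(2024, 3, 8),
    prevention_plan="Retrain site on AE capture from phone contacts",
)

print(missing_fields(draft))  # ['containment', 'owner']
```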

Monitoring reports should capture the inspection story. A report that says “SDV completed” tells very little. A stronger report explains what data was reviewed, what critical issues were found, how the site responded, what remains open, what was escalated, and which follow-up action will verify prevention. The CRA should document trends clearly because regulators and sponsors care about patterns, especially repeated failures in consent timing, AE capture, PI oversight, source correction, delegation, and endpoint documentation. These areas connect with inspection readiness, audit preparation, regulatory submissions, and clinical research ethics resources.

The best CRAs also know when a site needs training rather than another query. If the site repeatedly misses AE reconciliation, the monitor should review AE detection examples. If deviation logs lag behind EDC corrections, the monitor should walk through deviation identification. If endpoint source is weak, the monitor should clarify what counts as original source, who performs the assessment, and how missing data should be documented. This connects directly to CRC certification topics, CRC exam practice, CRA certification mistakes, and CRA exam preparation.

5. Key CRA Skills That Turn Data Review Into Trial Protection

The first skill is pattern recognition. A CRA must see that missing AE entries, late PI reviews, vague source notes, and repeated query closures may share one cause: the site does not understand what “documented evidence” means under the protocol. Pattern recognition grows through review of top CRA monitoring terms, essential CRA terminology, clinical research terms, and GCP principles.

The second skill is cross-document reconciliation. CRAs should compare EDC, source notes, lab portals, imaging reports, diaries, ePRO tools, medication lists, pharmacy logs, deviation logs, delegation logs, and correspondence. Many serious findings hide between systems. A hospitalization in a phone note, a new antibiotic in con meds, and an abnormal lab may each look minor alone; together, they may require AE or SAE review. This skill improves through clinical trial documentation training, remote patient monitoring tools, ePRO tool awareness, and medical writing tools.
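
The hospitalization, antibiotic, and abnormal lab example can be sketched as a cross-source check. The Python example below takes safety-relevant signals gathered from different records and returns those with no AE onset logged for the same subject within a tolerance window. Date proximity is a crude proxy chosen only for illustration; the helper name, the 7-day tolerance, and all data are hypothetical, and any flagged signal still requires clinical review by the site and medical monitor.

```python
from datetime import date


def unreconciled_signals(signals, ae_onsets, tolerance_days=7):
    """Return signals with no AE onset for the same subject within the tolerance.

    `signals` is a list of (subject_id, signal_date, source, note) tuples.
    `ae_onsets` maps subject_id to a list of AE onset dates from the AE log.
    """
    flagged = []
    for subject_id, signal_date, source, note in signals:
        onsets = ae_onsets.get(subject_id, [])
        matched = any(
            abs((signal_date - onset).days) <= tolerance_days for onset in onsets
        )
        if not matched:
            flagged.append((subject_id, signal_date, source, note))
    return flagged


# Illustrative data only
signals = [
    ("101", date(2024, 3, 2), "phone_note", "hospitalization mentioned"),
    ("101", date(2024, 3, 3), "con_meds", "new antibiotic started"),
    ("101", date(2024, 3, 4), "lab_portal", "abnormal result"),
    ("102", date(2024, 3, 10), "lab_portal", "abnormal result"),
]
ae_onsets = {"102": [date(2024, 3, 9)]}

for row in unreconciled_signals(signals, ae_onsets):
    print(row[0], row[2], row[3])
```

Here all three signals for subject 101 surface together, which is the point: each looks minor in its own system, and the combination is what prompts AE or SAE review.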

The third skill is data impact thinking. A CRA should ask what the discrepancy changes: safety reporting, eligibility, dosing, endpoint validity, analysis population, protocol compliance, investigator oversight, or inspection defensibility. This prevents the common monitoring mistake of treating every discrepancy as a clerical issue. Data impact thinking depends on clinical trial phases, phase II objectives, phase IV follow-up, and ICH guideline literacy.

The fourth skill is site coaching. Great CRAs correct the data and strengthen the process that produced the data. That means teaching the coordinator how to document eligibility, reminding the PI where oversight must be visible, helping the site distinguish AE from medical history, and explaining why source corrections must preserve the original entry. This skill connects with CRC role mastery, research assistant documentation skills, PI leadership strategies, and site monitoring visits.

The fifth skill is calm escalation. Data review often exposes uncomfortable truths: a subject should never have been enrolled, a safety event was missed, the PI signed late, a coordinator backfilled notes, or a primary endpoint lacks source support. The CRA’s job is to make the issue visible without exaggeration, document the evidence without emotion, and drive correction without losing site cooperation. That professional balance reflects CRA career readiness, clinical research certification standards, continuing education planning, and clinical research career portals.

A CRA who masters data review becomes valuable because sponsors trust their judgment. Sites trust their clarity. Clinical data teams trust their findings. Medical monitors trust their safety escalation. Project managers trust their trend detection. That is why data verification belongs at the center of CRA skill development, beside monitoring technique, GCP compliance, documentation accuracy, and inspection readiness.

6. FAQs: Clinical Trial Data Review & Verification for CRAs

- What is the difference between SDV and SDR?

SDV checks whether eCRF data matches source data. SDR evaluates whether source data itself is credible, complete, contemporaneous, attributable, and adequate for the protocol. A CRA using only SDV may confirm that a wrong or weak source entry was copied correctly. A CRA using SDR catches source weakness before it damages GCP documentation, CRF accuracy, protocol compliance, and inspection readiness.

- Which data should a CRA review first?

A CRA should start with critical data: informed consent, eligibility, randomization, primary endpoints, safety events, dosing, visit windows, prohibited medications, and major protocol deviations. Those fields affect subject safety, trial validity, and regulatory defensibility. Lower-risk fields can follow after the CRA has protected areas linked to informed consent, endpoint review, SAE reporting, and protocol deviation control.

- How should a CRA handle repeated discrepancies at a site?

Repeated discrepancies should be treated as a process trend. The CRA should identify the root cause, document examples, retrain the site, assign ownership, set due dates, and verify prevention at the next visit. Repeated issues often signal weak delegation, poor source habits, unclear PI oversight, or limited coordinator training. The response should use site management strategies, CRC compliance training, PI oversight guidance, and monitoring report discipline.

- What makes a strong data query?

A strong query identifies the exact inconsistency, requests the missing evidence, avoids leading language, and gives the site a clear path to correction. “Please confirm” creates poor query quality when the issue requires documentation. A stronger query asks the site to review the source record, update the eCRF if needed, or clarify the documented basis for the entry. Query strength improves with EDC system knowledge, clinical data management literacy, CRF best practices, and GCP documentation skills.

- When should a CRA escalate a data issue?

Escalation is appropriate when the issue affects safety, eligibility, primary endpoints, protocol compliance, data integrity, informed consent, blinding, or repeated site performance. The CRA should provide evidence, impact, urgency, owner, due date, and recommended corrective action. Strong escalation protects the study while keeping communication factual and controlled. This requires command of adverse event handling, blinding risk, clinical project communication, and risk management.

- How can new CRAs build data review skills?

New CRAs should practice reviewing mock source against eCRFs, learn protocol-to-source mapping, study AE and SAE reconciliation, review common deviation examples, and understand how monitoring reports tell the inspection story. Confidence comes from repeated exposure to real discrepancies and clear escalation logic. The strongest learning path combines CRA exam preparation, CRA practice questions, CRA monitoring terms, and clinical research certification comparison.