Scientific Communication & Presentations: MSL Best Practices

Medical science liaisons (MSLs) don’t get trusted because they “sound smart.” They get trusted because they translate evidence into decisions for key opinion leaders (KOLs), internal teams, and cross-functional partners, without distortion, overreach, or noise. If your slides feel ignored, your Q&A goes sideways, or you keep getting “nice deck” but no follow-up, the issue is rarely your knowledge. It’s your communication system: narrative, data handling, compliance guardrails, and delivery under pressure. This guide gives you a repeatable playbook for scientific presentations that win credibility, drive action, and keep you safe.

1) The MSL communication advantage: trust signals, not “presentation skills”

An MSL’s scientific communication is not “public speaking.” It’s field-to-strategy translation that must survive scrutiny from experts, compliance, and leadership, all at the same time. The fastest way to level up is to treat every talk like a monitoring visit: a clear objective, documented assumptions, and a tight risk plan (what you will not say). To frame how roles differ, anchor your approach in the responsibilities and expectations across the ecosystem, especially the handoffs between the MSL, the clinical research associate (CRA), the clinical research coordinator (CRC), pharmacovigilance (PV), and data management (DM). That means you should understand what drives site realities (CRC responsibilities & certification), how monitoring pressure shapes what stakeholders need (CRA roles, skills & career path), and how safety language must be handled (what is pharmacovigilance).

Here’s the hard truth: “great slides” don’t win KOLs. Credibility does. Credibility is built through:

Precision: You can define endpoints, populations, and limitations cleanly (primary vs secondary endpoints, placebo-controlled trials).

Method fluency: You explain why evidence is strong or weak without hedging to death (randomization techniques, blinding types & importance).

Operational realism: You respect how studies actually run (documentation, CRFs, data flows) (CRF definition & best practices, data monitoring committee roles).

Compliance discipline: You know what to do when the conversation drifts toward off-label, safety signals, or unverified claims (drug safety reporting timelines, aggregate reports in PV).

The MSL presentation “job to be done”

Every scientific presentation you give should produce one of these outcomes:

Clarity: stakeholders can repeat the conclusion and the caveats accurately.

Confidence: they know what the evidence supports and what it doesn’t.

Next step: a decision, a referral, a meeting, a follow-up question, or a study action.

If your talk doesn’t explicitly aim at one of those, it becomes a “data dump” that gets forgotten. That’s the core pain point behind “I’m doing strong work but I’m invisible,” a classic field problem that also shows up in networking outcomes. Structured participation in professional communities helps here (see the clinical research networking groups directory and best LinkedIn groups directory).

2) Build the deck like a study: objective, population, endpoint, limitation, decision

MSLs get into trouble when they treat slides as “education.” Education is vague. Your deck must be a decision engine: it clarifies what matters, what the evidence supports, and what action should follow. Your best deck structure looks surprisingly similar to how trials are interpreted: population → design → endpoints → results → limitations → implications. If you keep that discipline, you naturally avoid the most common credibility killers: endpoint confusion (primary vs secondary endpoints), design misstatements (randomization techniques, blinding types), and overconfidence around controls (placebo-controlled trials).

The “one-sentence objective” (non-negotiable)

Before you open PowerPoint, write this sentence:

“After this presentation, the audience will be able to ___ because the evidence shows ___ within ___ limits.”

That last clause—within limits—is your trust signal. It shows you understand uncertainty and you’re not selling. If you struggle with stats wording, build your language off a simple base of effect + uncertainty (and keep a reference open to fundamentals like biostatistics overview).
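To make “effect + uncertainty” concrete, here is a minimal sketch of one common way to phrase a binary outcome: a risk difference with a 95% Wald confidence interval. The function names and the counts are hypothetical and purely illustrative; they are not from any real study, and your statisticians may prefer a different interval method.

```python
import math

def risk_difference_ci(events_trt, n_trt, events_ctl, n_ctl, z=1.96):
    """Risk difference (treatment minus control) with a Wald interval.

    z=1.96 corresponds to an approximate 95% confidence level.
    """
    p_trt = events_trt / n_trt
    p_ctl = events_ctl / n_ctl
    rd = p_trt - p_ctl
    # Standard error of the difference between two independent proportions.
    se = math.sqrt(p_trt * (1 - p_trt) / n_trt + p_ctl * (1 - p_ctl) / n_ctl)
    return rd, rd - z * se, rd + z * se

def effect_sentence(rd, lower, upper):
    """Turn the numbers into one 'effect + uncertainty' line for a slide."""
    return (f"Response differed by {rd * 100:.1f} percentage points "
            f"(95% CI {lower * 100:.1f} to {upper * 100:.1f}).")

# Hypothetical counts: 30/100 responders on treatment vs 20/100 on control.
rd, lower, upper = risk_difference_ci(30, 100, 20, 100)
print(effect_sentence(rd, lower, upper))
```

With these made-up counts the interval crosses zero, which is exactly the kind of limit the final clause of your objective sentence should carry.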

Narrative architecture that wins expert rooms

Experts don’t need “storytelling” in the motivational sense. They need logic.

Use this order:

Clinical tension: what is hard right now (unmet need, heterogeneity, burden).

Decision point: where choices actually split (patient selection, sequencing, monitoring).

Evidence: what the study tested and what it found.

Boundaries: where the data stops, and what would change the conclusion.

This structure makes you resilient in Q&A because every slide has a job. And it’s how you keep cross-functional teams aligned—especially when data must flow from operational realities (CRFs, documentation standards) to interpretation (CRF best practices, DMC roles).

Slide design rules that signal “scientific adult”

If your deck looks “busy,” experts assume your thinking is busy.

Adopt these rules:

One slide = one claim. The claim must be testable or supportable.

Put the claim in the title (not a topic).

Show the proof (figure/table) and a one-line interpretation.

Put the caveat in the footer (not hidden in speaker notes).

Add a “what this changes” callout—because science without implication is trivia.

This is also where many MSLs feel stuck: “I don’t know what to say without sounding basic.” Your fix is to stop writing generic explanations and start writing decision implications tied to endpoints and limitations (primary vs secondary endpoints, biostatistics overview).

3) Data handling: how to explain evidence without over-claiming or under-selling

Scientific communication fails most often in the gap between what the data shows and what people want it to mean. Your job is not to shut down enthusiasm. Your job is to protect interpretation. The fastest way to do that is to build a repeatable “data integrity” routine—similar in spirit to GCP discipline and documentation rigor (useful anchors: GCP essentials for CRAs, handling audits, and documentation craft like managing clinical trial documentation).

The “Claim Ladder” (use it on every result)

Every result you present should climb this ladder:

Observation: what was measured, in whom, and when.

Comparison: what it was compared against (control, baseline, external benchmark).

Magnitude: effect size, not vibes (with uncertainty).

Reliability: design elements that protect validity (randomization, blinding) (randomization techniques, blinding types).

Boundary: what you cannot conclude (subgroups, endpoints not powered, short follow-up).

Implication: what a reasonable decision-maker can do next.

When you do this, you stop sounding basic because you’re not repeating the abstract. You’re teaching how to think about the evidence.
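If you draft slides from notes, the Claim Ladder can double as a pre-flight checklist. The sketch below is one possible way to do that, assuming you record each rung as a short line of text; the `ClaimLadder` class and its example content are hypothetical, not a standard tool.

```python
from dataclasses import dataclass, fields

@dataclass
class ClaimLadder:
    """One result slide, with one text field per rung of the ladder."""
    observation: str = ""
    comparison: str = ""
    magnitude: str = ""
    reliability: str = ""
    boundary: str = ""
    implication: str = ""

    def missing_rungs(self):
        """Return the names of rungs left empty, in ladder order."""
        return [f.name for f in fields(self)
                if not getattr(self, f.name).strip()]

# Hypothetical draft: everything is filled in except the boundary.
draft = ClaimLadder(
    observation="Response rate at week 24 in the enrolled population",
    comparison="Versus placebo",
    magnitude="Risk difference with 95% CI",
    reliability="Randomized, double-blind",
    implication="Discuss patient selection criteria",
)
print(draft.missing_rungs())  # ['boundary']
```

An empty list means the slide covers every rung; anything else tells you which caveat or implication to add before the room asks for it.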

Subgroup and endpoint traps (where credibility dies)

KOLs often test MSLs with “gotcha” questions that aren’t malicious—they’re diagnostic. They want to see if you understand:

Whether an endpoint was primary or secondary (primary vs secondary endpoints).

Whether the study design supports a causal claim or only an association (placebo-controlled trials).

Whether the comparison is interpretable given blinding and bias controls (blinding types).

Your protection: pre-load caveats on the slide that contains the data. If you wait for Q&A, it looks like you “missed it.”

Safety language: be useful without becoming a liability

Safety questions are where anxious MSLs either freeze or over-talk. You don’t need to “know everything.” You need to:

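Use clear definitions: adverse event (AE), serious adverse event (SAE), or suspected unexpected serious adverse reaction (SUSAR), depending on context.

Avoid specifics that could breach confidentiality or stray into causality assessments that belong to pharmacovigilance.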

Escalate appropriately and document.

Even if your talk is not “about safety,” build a safety-ready posture grounded in PV timelines and governance (drug safety reporting timelines, aggregate reports in PV, mastering regulatory submissions in PV).

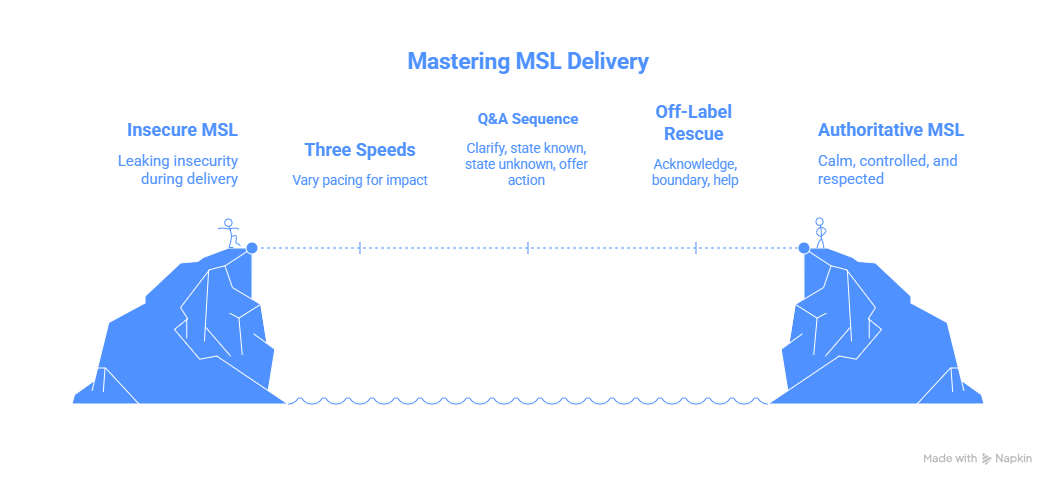

4) Delivery that earns authority: pacing, control, and elite Q&A

Delivery is where competent MSLs accidentally leak insecurity. The room doesn’t punish nerves; it punishes lack of control. Your goal is calm control—like a CRA running a clean visit: clear agenda, clean transitions, clean documentation logic (managing clinical trial documentation, clinical trial auditing & inspection readiness).

The “three speeds” method (prevents rambling)

Most presentations fail because everything is delivered at one speed.

Use three speeds:

Slow: definitions, endpoints, what was measured (primary vs secondary endpoints).

Normal: study flow, population, design protections (randomization techniques, blinding).

Fast: background, disease overview, anything the audience already knows.

This makes you sound senior, because it spends the audience’s time where precision matters and saves it where it doesn’t.

Q&A: the 4-step response that makes you unshakeable

When you get a hard question, don’t answer immediately. Run this sequence:

Clarify the question (repeat it back in precise terms).

State what is known from the presented evidence (tie to a slide).

State what is not known / not supported (boundary).

Offer the next best action (follow-up, escalation, data source, or method).

That boundary step is where trust is won. It mirrors how oversight bodies operate: what’s observed, what’s interpretable, and what’s escalated (DMC roles, drug safety timelines).

The “off-label drift” rescue script

If the conversation starts moving into off-label territory, don’t scold. Bridge.

Use:

Acknowledge: “I understand why you’re asking.”

Boundary: “I can’t address that directly in this setting.”

Help: “What I can do is…” (provide on-label evidence, discuss mechanism generally, or route to medical info).

This keeps you useful and safe—two qualities that separate high-performing MSLs from anxious presenters.

5) Scientific communication ecosystem: assets, channels, measurement, and career leverage

Great MSL communication isn’t only live presenting. It’s the system around it: pre-reads, follow-ups, documentation, and how you turn field conversations into influence. If you want faster career progression, you must turn presentations into proof assets and network effects. Use the same mindset that top professionals use to surface opportunities through structured communities and directories (networking groups & forums directory, best LinkedIn groups, plus career infrastructure like the clinical research conferences & events directory).

The follow-up package that multiplies your impact

After any strong interaction, send a follow-up that includes:

1-page summary: claim + proof + caveat + implication

Two citations (not ten)

One question that moves the relationship forward

A clear next step (meeting, resource, escalation)

This is how you stop being “nice deck” and start becoming “the person we call.”

Measure what matters (so you can show your value internally)

MSLs often lose influence because they can’t quantify impact beyond “good conversations.” Track:

Repeat interactions with the same KOLs

Follow-up conversions (meeting accepted, referral made, internal action triggered)

Insight quality (is it new, specific, decision-relevant?)

Time-to-resolution for escalations (especially safety/info routing)

If you want a mental model of operational quality, borrow from audit readiness logic: define failure modes, define controls, define evidence (handling audits, inspection readiness for CRAs).
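A few of these measures are easy to compute from a simple interaction log. The following is a rough sketch, assuming you keep one record per interaction; the `Interaction` fields, the `summarize` helper, and the sample log are all hypothetical, and your CRM will have its own schema.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import List, Optional

@dataclass
class Interaction:
    kol_id: str                               # hypothetical KOL identifier
    converted: bool                           # did the follow-up earn a next step?
    escalation_days: Optional[float] = None   # days to resolve an escalation, if any

def summarize(interactions: List[Interaction]) -> dict:
    """Summarize repeat KOLs, follow-up conversion, and escalation speed."""
    per_kol = Counter(i.kol_id for i in interactions)
    repeat_kols = sum(1 for n in per_kol.values() if n > 1)
    conversion_rate = (
        sum(i.converted for i in interactions) / len(interactions)
        if interactions else 0.0
    )
    days = [i.escalation_days for i in interactions
            if i.escalation_days is not None]
    return {
        "repeat_kols": repeat_kols,
        "conversion_rate": conversion_rate,
        "median_escalation_days": median(days) if days else None,
    }

# Made-up log for illustration only.
log = [
    Interaction("kol-a", True),
    Interaction("kol-a", False, escalation_days=3.0),
    Interaction("kol-b", True, escalation_days=5.0),
    Interaction("kol-c", False),
]
print(summarize(log))
```

Insight quality is deliberately left out: it needs human judgment, so score it in review rather than in code.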

Career leverage: become the person who simplifies complexity

The MSLs who rise fastest aren’t the most charismatic. They’re the ones who:

Can explain design and endpoints cleanly (randomization, endpoints)

Can translate stats into decisions without distortion (biostatistics overview)

Can stay compliant while staying helpful (pharmacovigilance guide)

Can connect field realities to operational execution (documentation, CRFs, oversight) (CRF best practices, DMC roles)

That combination is rare—and it’s exactly what scientific communication excellence signals.

6) FAQs: Scientific communication & presentations for MSLs

-

Stop explaining “what the trial is” and start explaining what the result means for a decision. Use the Claim Ladder: observation → comparison → magnitude → reliability → boundary → implication. Anchor your language in endpoint clarity (primary vs secondary endpoints) and design protections (randomization, blinding).

-

Pre-build boundary phrases and practice them. Most Q&A failures come from answering too fast and drifting into unsupported interpretation. Train yourself to: clarify → answer from evidence → state boundary → give next step. If the question touches safety, use PV-aligned discipline (drug safety timelines, aggregate PV reports).

-

Always ask: “Was the subgroup pre-specified and powered?” If not, treat it as exploratory and label it clearly. Keep your phrasing tied to what was measured and why it matters. If endpoints are being mixed up, reset the room by defining them again (primary vs secondary endpoints).

-

Complex data requires simpler structure, not more text. One slide = one claim; show the figure and one interpretation line; put caveats in the footer. If you need a reference for explaining uncertainty cleanly, keep a stats refresher handy (biostatistics overview).

-

Use a bridge: acknowledge → boundary → helpful alternative. Offer on-label evidence, discuss mechanisms generally, or route to medical information appropriately. Your aim is to avoid “answering anyway,” which is the fastest way to create risk while losing trust. PV and regulatory discipline should be second nature (pharmacovigilance guide).

-

Treat every talk as the start of a relationship loop: deliver clarity, follow up with a 1-page decision summary, and ask a question that earns a next step. Then build visibility through the right communities and events (networking groups & forums, LinkedIn groups directory, conferences & events)—not noisy posting, but high-signal frameworks and playbooks.