Interactive Checklist Generator for Clinical Trial Start-Up Activities

Clinical trial start-up looks simple on paper until ownership blurs, approvals move at different speeds, vendors ask for missing details, and a site cannot activate because one “small” dependency was never cleared. An effective checklist generator fixes that by turning start-up into a visible execution system instead of a pile of disconnected tasks. This guide shows how to build a high-value, role-aware, interactive checklist for study start-up activities so teams can reduce preventable delays, tighten documentation, improve compliance, and move from kickoff to first patient in with fewer surprises.

1. Why an Interactive Checklist Generator Matters in Clinical Trial Start-Up

Clinical trial start-up fails most often when teams mistake a task list for an operational control system. A real checklist generator does more than display steps. It forces sequencing, flags dependencies, assigns owners, captures evidence, and makes it obvious when a start-up milestone is “green” in name only. That distinction matters because start-up is where poor planning creates downstream pain in protocol management, regulatory document handling, GCP compliance, informed consent procedures, and clinical trial amendments.

For sponsors and study teams, the problem is rarely that nobody knows the big steps. Everyone knows a study needs site selection, contracts, budgets, training, essential documents, and vendor readiness. The real issue is that start-up work moves through hidden dependencies. You cannot finalize training until the right version of the protocol is stable. You cannot activate a site safely if pharmacy, lab, investigational product handling, escalation pathways, and source workflows are still fuzzy. You cannot call a site ready when the team is still shaky on adverse event reporting, SAE procedures, protocol deviations, or audit readiness.

That is why a checklist generator should be structured around execution risk, not just chronology. It should connect study design choices like randomization, blinding, endpoint definitions, placebo controls, and sample size assumptions to the operational tasks sites must execute correctly on day one.

A strong generator also creates accountability across roles. It helps the CRA, CRC, principal investigator, project manager, medical monitor, and regulatory specialist see the same start-up reality instead of six different versions of “almost ready.”

2. The 30 Clinical Trial Start-Up Activities Your Generator Should Track

The checklist above becomes powerful only when the generator treats each line as a controlled decision point. That means every item needs five fields at minimum: task name, owner, prerequisite, proof of completion, and escalation trigger. Without those fields, teams start marking tasks complete because a meeting happened, an email was sent, or someone said “we should be fine.” That is exactly how start-up drift begins.
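
As a rough illustration, the five minimum fields could be modeled as a small record in which completion depends on filed evidence rather than on a status toggle. This is only a sketch: the class name, the `proof_reference` field, the file name, and the 10-day trigger are hypothetical choices for the example, not part of any specific tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChecklistItem:
    """One start-up task carrying the five minimum fields."""
    task_name: str
    owner: str
    prerequisite: Optional[str]   # task that must finish first, if any
    proof_of_completion: str      # the evidence expected for this task
    escalation_trigger: str       # condition that should prompt escalation
    proof_reference: Optional[str] = None  # hypothetical pointer to filed evidence

    def is_complete(self) -> bool:
        # Complete only when evidence is on file, not because a meeting
        # happened or an email was sent.
        return bool(self.proof_reference and self.proof_reference.strip())


item = ChecklistItem(
    task_name="Site staff protocol training",
    owner="CRA",
    prerequisite="Protocol final review",
    proof_of_completion="Signed training log for current protocol version",
    escalation_trigger="Training not documented 10 days before planned activation",
)
print(item.is_complete())  # False: no evidence filed yet

item.proof_reference = "training-log-site-001.pdf"
print(item.is_complete())  # True
```

Deriving completion from the evidence field means nobody can mark a task done without attaching something reviewable.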

For example, protocol final review should connect directly to downstream assets such as the case report form, clinical trial documentation, site management strategy, monitoring techniques, and patient safety oversight. If the protocol changes but the checklist does not force revalidation of those linked tasks, your generator is giving false confidence.
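
One way to force that revalidation is to record which protocol version each linked task was last validated against and flag anything that lags behind. The sketch below assumes simple version strings and invented task names; a real system would pull these from its document control records.

```python
from dataclasses import dataclass


@dataclass
class LinkedTask:
    name: str
    validated_against: str  # protocol version the task was last validated against


def tasks_needing_revalidation(tasks, current_protocol_version):
    """Return names of tasks validated against a different protocol version."""
    return [t.name for t in tasks if t.validated_against != current_protocol_version]


linked = [
    LinkedTask("Case report form", "v1.0"),
    LinkedTask("Monitoring plan", "v2.0"),
    LinkedTask("Site training deck", "v1.0"),
]

stale = tasks_needing_revalidation(linked, "v2.0")
print(stale)  # ['Case report form', 'Site training deck']
```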

The same applies to site-facing readiness. A site should not move toward activation until the team has clarity on CRC responsibilities, patient recruitment workflow, informed consent best practices, study documentation standards, and research compliance fundamentals. When those elements are incomplete, the study may launch, but the site will still behave like it is in rehearsal.

A valuable checklist generator should also rank tasks by operational sensitivity. Missing a vendor contact matrix is inconvenient. Missing safety escalation clarity is dangerous. Slow budget negotiation affects timelines. Weak training on drug safety reporting, aggregate reports, signal detection, and risk management plans creates problems that are harder to contain later.
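
Ranking by operational sensitivity can be as simple as attaching an ordered level to each open task and sorting on it. The three levels below are an assumption made for this sketch, loosely following the examples in the paragraph above; a real study would define its own scale.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical three-level scale; higher means more dangerous if missed.
    INCONVENIENT = 1  # e.g. missing vendor contact matrix
    TIMELINE = 2      # e.g. slow budget negotiation
    SAFETY = 3        # e.g. unclear safety escalation


open_tasks = [
    ("Vendor contact matrix", Sensitivity.INCONVENIENT),
    ("Safety escalation pathway", Sensitivity.SAFETY),
    ("Budget negotiation", Sensitivity.TIMELINE),
]

# Most sensitive items first, so safety gaps surface at the top of the list.
ranked = sorted(open_tasks, key=lambda task: task[1], reverse=True)
for name, level in ranked:
    print(f"{level.name:<12} {name}")
```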

If you want the generator to be genuinely useful, build logic that allows one task to block another. “Training scheduled” should not unlock activation. “Training completed with current protocol version, role-specific attendance, and unresolved questions documented” should. That is the difference between administrative movement and operational readiness.
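
The blocking rule from the training example might look like the sketch below. The condition names are illustrative strings taken from that example, and the function name `blocked_by` is hypothetical.

```python
def blocked_by(task, prerequisites, done):
    """Return the prerequisite conditions still unmet for a task.

    An empty list means the task is unlocked.
    """
    return [p for p in prerequisites.get(task, []) if p not in done]


# Activation depends on completed, evidenced training, not on scheduling.
prerequisites = {
    "Site activation": [
        "Training completed on current protocol version",
        "Role-specific attendance recorded",
        "Unresolved questions documented",
    ],
}

done = {"Training scheduled"}
print(blocked_by("Site activation", prerequisites, done))  # all three still open

done.update(prerequisites["Site activation"])
print(blocked_by("Site activation", prerequisites, done))  # []
```

Returning the list of unmet conditions, rather than a bare true or false, tells the owner exactly what still stands between the site and activation.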

3. How to Build the Checklist Generator So It Produces Real Readiness, Not Cosmetic Progress

Start by building the generator around milestone gates rather than generic lists. The most practical structure is: study-level start-up, site-level readiness, system readiness, safety readiness, recruitment readiness, and activation readiness. Each gate should have pass-fail criteria. This prevents teams from declaring success because the majority of tasks look done while one high-risk item remains open.
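
A pass-fail gate can be sketched as a check that every item in the gate is done, with the completion percentage reported only as context. The gate contents below are invented for illustration.

```python
def gate_status(items):
    """Evaluate one milestone gate.

    The gate passes only if every item is done; a high completion
    percentage alone never counts as a pass.
    """
    open_items = [name for name, is_done in items.items() if not is_done]
    percent_done = 100 * (len(items) - len(open_items)) // len(items)
    return {"passed": not open_items, "percent_done": percent_done, "open": open_items}


# Hypothetical safety readiness gate: three of four items are done.
safety_gate = {
    "SAE reporting workflow confirmed": True,
    "Safety escalation contacts listed": True,
    "Medical monitor coverage confirmed": True,
    "Staff trained on AE reporting": False,
}

status = gate_status(safety_gate)
print(status)
```

Here the gate is 75 percent done and still fails, which is the behavior the pass-fail rule is meant to enforce.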

Second, make every checklist item role-aware. A CRA career pathway teaches one set of operational instincts, while the CRC, principal investigator, clinical data manager, and regulatory affairs pathways each teach another. Your generator should show each role what must be done, what must be reviewed, and what must be acknowledged.
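
A minimal sketch of a role-aware view, assuming each item stores which role does, reviews, or acknowledges it. The tasks and role assignments are hypothetical examples, not a recommended delegation model.

```python
# Each item maps a role to the action that role owes on the task.
ITEMS = [
    {"task": "Informed consent form version check",
     "roles": {"CRC": "do", "CRA": "review", "PI": "acknowledge"}},
    {"task": "Delegation log completed",
     "roles": {"PI": "do", "CRA": "review"}},
    {"task": "EDC accounts tested",
     "roles": {"CRC": "do", "Clinical data manager": "review"}},
]


def view_for(role, items):
    """Group the tasks a role must do, review, or acknowledge."""
    view = {"do": [], "review": [], "acknowledge": []}
    for item in items:
        action = item["roles"].get(role)
        if action:
            view[action].append(item["task"])
    return view


print(view_for("PI", ITEMS))
print(view_for("CRA", ITEMS))
```

All roles read from the same item list, so the views differ without the underlying checklist splitting into separate copies.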

Third, require evidence capture. If a task says “EDC ready,” the evidence cannot be a verbal update. It should be active accounts, tested permissions, known workflow instructions, and issue escalation contacts. If a task says “site trained,” evidence should include the protocol version, date, attendee names, unresolved questions, and retraining trigger if amendments occur.
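
Evidence capture for the “site trained” example could be enforced with a required-field check like the one below. The field names are assumptions derived from the list in the paragraph above, and the record values are made up.

```python
# Evidence fields required before "site trained" can be accepted.
REQUIRED_TRAINING_EVIDENCE = (
    "protocol_version",
    "date",
    "attendees",
    "unresolved_questions",
    "retraining_trigger",
)


def missing_evidence(record, required=REQUIRED_TRAINING_EVIDENCE):
    """Return required fields that are absent, None, or an empty string.

    An empty list is accepted, so "no unresolved questions" can be
    recorded explicitly instead of being left out.
    """
    return [f for f in required if f not in record or record[f] in ("", None)]


training_record = {
    "protocol_version": "v2.0",
    "date": "2024-05-14",
    "attendees": ["CRC", "PI", "Pharmacist"],
    "unresolved_questions": [],
}

print(missing_evidence(training_record))  # ['retraining_trigger']
```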

Fourth, add failure-mode prompts inside the generator. Every major task should ask, “What usually goes wrong here?” That one field forces practical thinking. For SIV, common issues include wrong attendees, incomplete pharmacy alignment, lab confusion, weak screening workflow, and vague safety escalation. For consent readiness, common issues include outdated forms, unclear reconsent triggers, weak documentation flow, and staff who understand the words but not the process. Embedding those prompts helps teams think like auditors, monitors, and investigators instead of optimistic project trackers.
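
The failure-mode field can start as a plain lookup that attaches known problems to a task whenever it is opened. The entries below come from the SIV and consent examples above; the function name and message wording are hypothetical.

```python
# Known failure modes per task, seeded from the examples in the text.
FAILURE_MODE_PROMPTS = {
    "SIV": [
        "wrong attendees",
        "incomplete pharmacy alignment",
        "lab confusion",
        "weak screening workflow",
        "vague safety escalation",
    ],
    "Consent readiness": [
        "outdated forms",
        "unclear reconsent triggers",
        "weak documentation flow",
        "staff who know the words but not the process",
    ],
}


def failure_prompt(task):
    """Build the 'What usually goes wrong here?' prompt for a task."""
    modes = FAILURE_MODE_PROMPTS.get(task)
    if not modes:
        return f"{task}: What usually goes wrong here? (nothing recorded yet)"
    return f"{task}: What usually goes wrong here? Check: " + "; ".join(modes)


print(failure_prompt("SIV"))
print(failure_prompt("Vendor kickoff"))
```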

Finally, design the generator to support decision-making, not just recordkeeping. The best version will show overdue blockers, high-risk unresolved items, activation-critical tasks, and areas most likely to cause protocol deviations or audit findings. When a tool cannot tell you what will hurt the study next, it is not a start-up control system. It is a prettier spreadsheet.
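
A decision-oriented view can be sketched as a filter over open tasks that surfaces overdue activation blockers and unresolved high-risk items. The task fields, risk labels, and dates here are illustrative assumptions.

```python
from datetime import date


def what_hurts_next(tasks, today):
    """Summarize open tasks most likely to delay or endanger activation."""
    open_tasks = [t for t in tasks if not t["done"]]
    return {
        "overdue_blockers": [
            t["name"] for t in open_tasks
            if t["blocks_activation"] and t["due"] < today
        ],
        "high_risk_open": [t["name"] for t in open_tasks if t["risk"] == "high"],
    }


tasks = [
    {"name": "IP handling instructions confirmed", "due": date(2024, 3, 1),
     "risk": "high", "blocks_activation": True, "done": False},
    {"name": "Vendor contact matrix", "due": date(2024, 3, 1),
     "risk": "low", "blocks_activation": False, "done": False},
    {"name": "Site contract executed", "due": date(2024, 2, 15),
     "risk": "medium", "blocks_activation": True, "done": True},
    {"name": "SAE reporting training", "due": date(2024, 4, 1),
     "risk": "high", "blocks_activation": True, "done": False},
]

report = what_hurts_next(tasks, today=date(2024, 3, 10))
print(report)
```

Passing `today` in explicitly keeps the report reproducible, which helps when the same snapshot has to be reviewed later.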

What is your biggest clinical trial start-up blocker right now?

Choose one. Your answer points to the fastest fix.

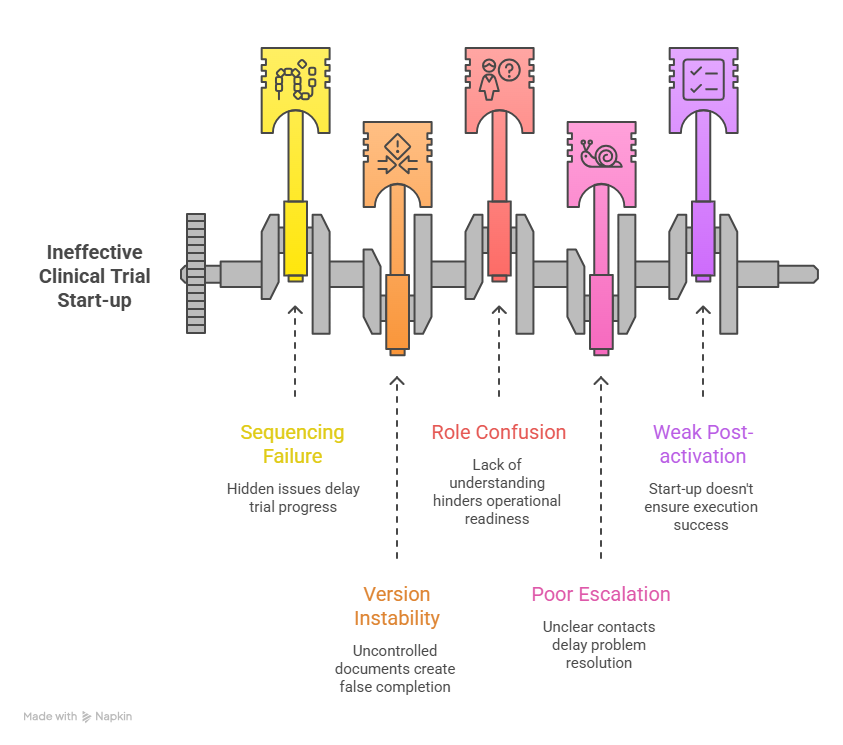

4. The Start-Up Failure Points Your Generator Must Expose Early

A strong start-up tool should make failure visible while there is still time to correct it cheaply. One of the most common problems is sequencing failure. Teams chase visible deliverables like contracts and meetings while underweighting hidden readiness issues like source workflows, investigational product handling, subject scheduling logistics, and escalation clarity. Those hidden issues are what later surface as query volume, consent mistakes, missed windows, and site frustration.

Another major failure point is version instability. When protocol language, consent documents, training decks, or operational manuals are not tightly controlled, the generator starts tracking the illusion of completion instead of real completion. That is why start-up must be linked to protocol development discipline, clinical project planning, resource allocation, vendor management, and stakeholder communication.

The third failure point is role confusion. Sites are often told what must be submitted, but not what must be operationally understood. That gap hurts new teams especially hard. A site might have all signatures in place and still be unready because nobody has fully internalized visit flow, eligibility decision logic, source expectations, screening failure handling, or the right way to route adverse event reviews. Your generator should force role acknowledgments, not just team attendance.

The fourth failure point is poor escalation design. When something goes wrong during start-up, teams lose days figuring out who owns the answer. Is it data management, medical, regulatory, vendor support, or the sponsor lead? A high-value checklist should include escalation contacts, response expectations, and criteria for urgent versus non-urgent issues. That becomes even more important in complex designs involving DMC oversight, IND-linked expectations, or specialized safety workflows tied to pharmacovigilance case processing.
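
An escalation matrix with contacts and response expectations might be sketched as follows. The functional areas mirror the question in the paragraph above, while the contact titles and response hours are placeholder assumptions that each study would set for itself.

```python
# Hypothetical escalation matrix: owner and expected response time in hours.
ESCALATION = {
    "data management": {"contact": "Data management lead", "urgent": 4, "routine": 48},
    "medical": {"contact": "Medical monitor", "urgent": 2, "routine": 24},
    "regulatory": {"contact": "Regulatory specialist", "urgent": 8, "routine": 72},
    "vendor": {"contact": "Vendor support desk", "urgent": 8, "routine": 72},
}
DEFAULT_OWNER = {"contact": "Sponsor lead", "urgent": 4, "routine": 48}


def route(issue_area, urgent):
    """Return (contact, expected response hours) for an issue.

    Unknown areas fall back to the sponsor lead so no issue is ownerless.
    """
    entry = ESCALATION.get(issue_area, DEFAULT_OWNER)
    return entry["contact"], entry["urgent" if urgent else "routine"]


print(route("medical", urgent=True))       # ('Medical monitor', 2)
print(route("contracts", urgent=False))    # ('Sponsor lead', 48)
```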

The final failure point is weak post-activation thinking. Start-up should not end at activation. Your generator should include a short early-performance review after first screening and first dosing. That is where teams catch whether start-up translated into actual execution. A site that looked ready but struggles immediately is telling you the checklist measured paperwork better than practice.

5. How CRAs, CRCs, Sponsors, and PIs Should Use the Generator Differently

The same generator should serve different users in different ways. For the CRA, it should function as a monitoring and readiness lens. The CRA needs visibility into document completeness, staff qualification, site workflow realism, SIV gaps, and activation risks. This connects directly to site qualification visits, CRA documentation techniques, GCP essentials for CRAs, investigator site management, and inspection readiness.

For the CRC, the generator should act like a day-one operating map. It should show not only what must be done, but how the site will actually execute screening, consent, visit scheduling, source documentation, safety escalation, and data entry. That is where CRC exam-style thinking, time management skills, protocol management discipline, GCP strategies for coordinators, and regulatory document control all come together in one operational view.

For sponsors and project managers, the generator should reveal cross-functional health. They need to know which sites are truly activation-ready, which vendors are bottlenecking progress, which start-up tasks are repeatedly delayed across the portfolio, and where budget or legal processes are masking clinical risk. That view should align with project manager best practices, budget oversight, clinical operations career thinking, sponsor responsibilities, and vendor execution control.

For principal investigators, the generator should center oversight, safety, and delegation. The PI does not need every operational detail in the same way a coordinator does, but the PI does need clean visibility into qualified staff, delegated responsibilities, protocol-critical procedures, informed consent integrity, and subject safety pathways. That is inseparable from PI ethical responsibilities, adverse event handling, patient safety oversight, team leadership, and protocol oversight.

When one generator can speak clearly to each of these users without flattening their responsibilities into a single generic view, start-up becomes easier to control, easier to audit, and far less likely to break under pressure.

6. FAQs

-

At minimum, it should include task name, owner, prerequisite, due date, proof of completion, version reference, escalation contact, and status logic that can block dependent tasks. It should also distinguish between administrative completion and operational readiness. A study is not ready because a document exists. It is ready when the right people understand and can execute the process tied to that document.

-

The biggest mistake is creating flat lists with no dependency logic. That produces false progress. A better model links critical tasks to operational consequences, especially around informed consent, safety reporting, documentation quality, and site readiness.

-

There is no magic number, but most useful generators start from a baseline of roughly 25 to 40 activation-critical activities, then expand with study complexity. The right number depends on design complexity, vendor footprint, country mix, data flows, safety profile, and site burden. The real test is whether the list helps the team predict risk before activation, not whether it looks comprehensive.

-

It should be both. The master engine should be universal so all teams work from one truth, but the interface should be role-based so CRAs, CRCs, PIs, sponsors, and vendors each see the tasks, dependencies, and proofs most relevant to them. That improves accountability without fragmenting the study into separate checklists.

-

A site is truly ready when documentation, training, systems, workflows, safety paths, and staffing all align under the current protocol version. A strong sign of readiness is that the site can walk through screening, consent, visit conduct, data entry, deviation handling, and SAE escalation without guessing. If the team still answers operational questions with “we will figure that out once we start,” the site is not ready.

-

Do not abandon it the day the activation email goes out. Keep a short post-activation phase for first-screened, first-enrolled, and early-query review. That is where the team can validate whether start-up planning translated into real execution and update the generator for future sites. The best checklist is not just a tracker. It becomes a learning system that improves every next start-up cycle.