Ranking Top Countries for Clinical Trials (2026 Comprehensive Report)

Choosing the right country for a trial is no longer a “where do we have sites?” decision. It is a risk, speed, recruitment, documentation, and oversight decision that shapes whether a study hits milestones or bleeds months through avoidable rework. Teams that understand the operational differences between markets make better decisions across clinical research associate responsibilities, clinical research coordinator execution, sponsor oversight, GCP compliance, and inspection readiness.

This report ranks countries the way operators think, not the way vanity lists do. It prioritizes startup predictability, enrollment realism, data quality, regulatory clarity, site depth, and cross-functional execution across protocol management, adverse event handling, documentation control, protocol deviations, and patient safety oversight.

1. How To Rank Countries For Clinical Trials In 2026

A serious country ranking cannot be built on raw trial volume alone. High volume can hide long activation cycles, overloaded investigators, weak follow-through on recruitment promises, or fragmented vendor coordination. A country belongs near the top only when it can convert protocol intent into clean execution across site selection and qualification, investigator site management, informed consent procedures, source-facing documentation workflows, and GCP training requirements.

For 2026, the countries that deserve the highest positions are the ones that combine regulatory predictability with execution depth. IQVIA’s 2026 R&D report says trial starts became increasingly concentrated among U.S. sponsors, while Western Europe still held the largest regional share of global trial-country use in 2025. The EU’s Clinical Trials Regulation remains a major harmonization force, the UK reports faster combined review performance, Canada still operates on a 30-day default CTA review, China retains a 60-working-day implied approval pathway, and Australia continues to run under CTN/CTA frameworks with ongoing national clinical-trial reform.
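
The review windows above are easier to compare once they are converted to calendar dates. The sketch below is only an illustration: the submission date is hypothetical, working days are assumed to be Monday to Friday with public holidays ignored, and the clock is assumed to start on the submission date, which may not match how each regulator counts.

```python
from datetime import date, timedelta


def add_calendar_days(start: date, days: int) -> date:
    """Return the date that falls `days` calendar days after `start`."""
    return start + timedelta(days=days)


def add_working_days(start: date, days: int) -> date:
    """Return the date that falls `days` working days after `start`.

    Assumption: working days are Monday-Friday; public holidays are ignored.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday-Friday
            remaining -= 1
    return current


# Hypothetical submission date (a Monday), used only for illustration.
submission = date(2026, 1, 5)

canada_default = add_calendar_days(submission, 30)  # 30-day default CTA review
china_implied = add_working_days(submission, 60)    # 60-working-day implied approval

print("Canada, 30 calendar days:", canada_default.isoformat())
print("China, 60 working days:", china_implied.isoformat())
print("China window in calendar days:", (china_implied - submission).days)
```

Under these assumptions, a 60-working-day window is roughly twelve calendar weeks, so the two pathways differ by more than the headline numbers suggest.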

That matters because country choice is really a bundle of decisions: how well teams can execute randomization, preserve blinding integrity, protect primary and secondary endpoints, control case report form quality, and escalate serious adverse events without operational drag. The global registry landscape is also broad enough that “presence” is meaningless on its own; ClinicalTrials.gov says it lists studies in 225 countries and territories, which is exactly why sponsors need a real selection framework instead of a simplistic popularity list.

Below is the practical 25+ country matrix. It is a decision tool, not a beauty contest. Use it the way strong operators use resource allocation plans, vendor governance models, risk management plans, budget oversight, and stakeholder communication frameworks.

2. Top Countries For Clinical Trials In 2026, Ranked

This ranking is a sponsor-operations ranking, not a raw registry leaderboard. It asks a harder question: where can a study move from protocol design to site activation, from patient recruitment to data review, and from safety reporting to audit defense with the fewest preventable breakdowns? EFPIA/IQVIA data support the weight of the U.S., China, Spain, Germany, Japan, and other major markets in commercial trial activity, while regulatory sources explain why the UK, Australia, Canada, and China remain strategically important in 2026.

1. United States

The U.S. stays number one because it combines scale, specialist density, sponsor visibility, and therapeutic breadth better than any single market. It is where many complex studies are easiest to defend across endpoint strategy, DMC governance, monitoring technique, inspection readiness, and medical oversight. Its weakness is not capability. Its weakness is waste. Teams overpay for famous sites that under-recruit, accept vague feasibility answers, and then spend months in rescue mode.

2. China

China ranks second because it is now impossible to treat it as an “optional future market.” It is a core market for scale, oncology momentum, and serious development strategy. IQVIA notes deepening China-linked R&D activity, while EFPIA data show China’s major presence in commercial trial starts and single-country trial share. The upside is obvious. The trap is assuming size automatically creates clean execution. Success depends on disciplined document control, strong protocol deviation management, tight vendor oversight, serious stakeholder communication, and proactive risk planning.

3. Spain

Spain earns a top-three position because it repeatedly performs better than lazy country-selection habits expect. It is a high-value EU market for sponsors who need quality and enrollment discipline without drifting into prestige-site fantasy. Spain is especially strong when studies demand clean collaboration between CRCs, CRAs, PIs, project managers, and medical monitors. The danger is becoming too dependent on a narrow club of well-known hospitals.

4. United Kingdom

The UK ranks fourth because it combines scientific credibility with real regulatory relevance. The combined review model and the UK’s reported speed gains matter in a 2026 environment where startup friction destroys study economics. This is a particularly strong market for translational studies, sophisticated protocol logic, and trial models that demand smart cross-talk between scientific communication, regulatory responsibility, patient safety oversight, clinical operations leadership, and research compliance.

5. Australia

Australia remains one of the best tactical countries in the world when the goal is to move fast. That matters for early phase, proof-of-concept strategies, and studies where startup delay is more dangerous than pure volume limits. Its CTN/CTA structure and reform direction continue to make it highly attractive for sponsors that think in cycle-time terms. But Australia is not a universal solution. Teams that mistake speed for scale can design themselves into recruitment constraints later. Use it the same way smart teams use sample-size planning, resource allocation, budget oversight, protocol amendment control, and site strategy: as a force multiplier, not a magic trick.

6. Germany

Germany still belongs near the top because quality-sensitive studies need countries where the infrastructure is mature and the standards are taken seriously. It rewards teams that arrive prepared. It punishes teams that arrive vague. Strong performance here depends on excellent clinical documentation, exact informed consent execution, robust GCP essentials, well-managed audit preparation, and competent regulatory affairs support.

7. Canada

Canada ranks high because it gives sponsors something scarce: predictability. The 30-day CTA review structure helps, but the real value is execution quality in a North American environment that can support serious oversight. It works especially well when teams need stable alignment across monitoring plans, study documentation, ethics workflows, sponsor governance, and quality assurance functions.

8. South Korea

South Korea is one of the strongest countries for sponsors who want digitally mature hospitals, disciplined data behavior, and strong specialist ecosystems. It is particularly useful when operational excellence matters more than broad country breadth. The common failure here is waiting too long to secure the strongest sites and then pretending lower-tier substitution will produce the same output.

9. India

India belongs in the top 10 because patient-access scale and cost-efficiency are too important to ignore. But India is also where shallow sponsor thinking gets punished fastest. A weak site-selection process creates monitoring pain, documentation drift, and quality inconsistency that no dashboard can fix later. Strong performance depends on hard screening across lab processes, documentation discipline, AE reporting, and protocol compliance. CDSCO’s active publication of approvals also shows the market is operationally busy, which is a strength only if governance keeps pace.

10. Japan

Japan rounds out the top 10 because it remains indispensable for certain quality-heavy, specialist, and scientifically serious programs. It is not a country you choose for sloppy speed fantasies. It is a country you choose when the study deserves careful localization, strong hospital systems, and data environments you trust.

3. Which Countries Win By Trial Type, Phase, And Operating Model

The biggest mistake in country ranking is acting as if one top-10 list answers every study. It does not. The right country depends on whether the protocol is enrollment-hungry, endpoint-sensitive, safety-heavy, device-linked, rare-disease focused, or deeply dependent on site behavior. Teams that understand this make better calls across placebo-controlled design, blinding strategy, randomization logic, sample-size assumptions, and endpoint protection.

For early-phase speed, Australia, Belgium, Singapore, the UK, and selected U.S. centers stand out because cycle time matters more than geographic spread. For large-scale enrollment, the U.S., China, India, Brazil, and selected Central and Eastern European countries deserve serious consideration, but only if sponsors can support site management, patient recruitment workflows, source-data quality, regulatory document control, and deviation containment.

For oncology and specialist indications, the U.S., China, Spain, Germany, South Korea, and Japan are usually stronger because they combine specialist hospitals with enough protocol literacy to protect difficult inclusion criteria. For multicountry EU execution, Spain, Germany, France, the Netherlands, Belgium, and Poland often outperform lazier assumptions because they provide a better quality-speed balance under the EU clinical-trial framework, stronger GCP compliance habits, more structured stakeholder communication, clearer sponsor role execution, and better quality-audit readiness.

For decentralized and digitally supported models, the best country is not simply the most technologically advanced one. It is the one where digital workflows can coexist with clean oversight. That means country choice must be tied to patient safety oversight, adverse-event review discipline, clinical documentation standards, monitoring models, and project-planning rigor. The wrong country can make a digital trial feel modern while quietly creating unresolved compliance risk.

4. Why Strong Countries Still Produce Failed Studies

Most failed country strategies do not fail because the country was objectively bad. They fail because the sponsor asked the wrong country to carry the wrong operational burden. A country can be excellent and still become a mistake when the protocol needs intense CRC ownership, deep CRA oversight, strong PI accountability, disciplined adverse-event handling, and active medical monitoring, but the execution plan funds none of those properly.

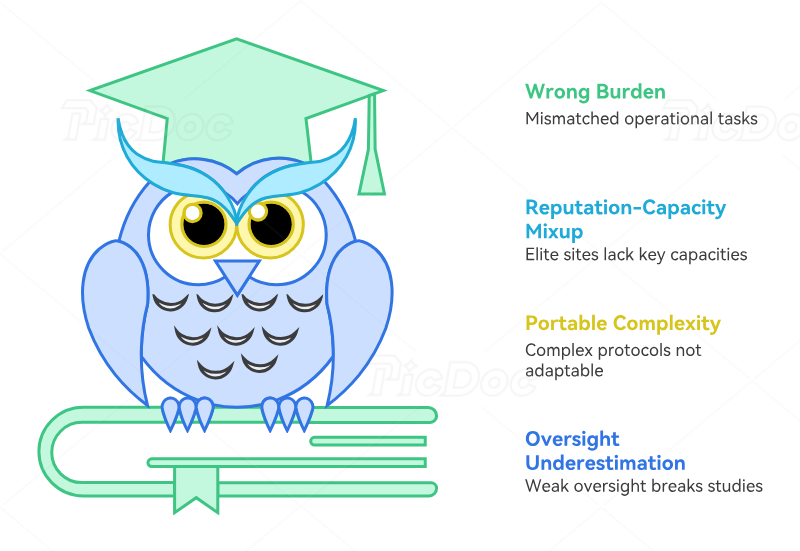

The most common failure mode is confusing site reputation with site capacity. Elite names get chosen, but nobody verifies whether those teams still have coordinator bandwidth, realistic patient access, or disciplined regulatory document management. The second failure mode is pretending protocol complexity is portable. It is not. A study with heavy safety-reporting requirements, frequent CRF pressure, strict endpoint sensitivity, repeated amendment risk, and narrow eligibility logic needs a different country mix than a simpler late-phase program.

Another failure mode is underestimating oversight architecture. Multi-country studies break when sponsors do not define who owns query escalation, safety narrative consistency, document version control, translation governance, vendor accountability, and inspection-defense evidence. That is why country ranking must always be tied to clinical trial auditing, quality assurance functions, clinical compliance leadership, regulatory specialist roles, and project-management discipline. Strong countries reward strong systems. They do not rescue weak ones.

5. How To Use This Ranking If You Are Building Studies Or Building A Career

Sponsors should use this ranking to create a country scorecard before country commitment, not after problems begin. That scorecard should measure startup predictability, site depth, patient access, protocol fit, documentation risk, language burden, oversight intensity, and rescue cost. Teams should pressure-test those scores with staffing-agency insight, job-market signals, conference intelligence, journal trends, and continuing-education sources.
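
A scorecard like this can be sketched as a weighted average. The example below is a minimal illustration, not a validated model: the weights, the 1-to-5 scale, the placeholder country names, and every score are hypothetical and do not come from this report. Burden-type dimensions (documentation risk, language burden, oversight intensity, rescue cost) are inverted so that a higher final score always means a better fit.

```python
# Hypothetical weights for the eight scorecard dimensions named above.
WEIGHTS = {
    "startup_predictability": 0.20,
    "site_depth": 0.15,
    "patient_access": 0.15,
    "protocol_fit": 0.15,
    "documentation_risk": 0.10,
    "language_burden": 0.05,
    "oversight_intensity": 0.10,
    "rescue_cost": 0.10,
}

# Dimensions where a higher raw score means a heavier burden.
BURDENS = {"documentation_risk", "language_burden", "oversight_intensity", "rescue_cost"}


def country_score(scores, weights=WEIGHTS, scale_max=5):
    """Weighted average on a 1..scale_max scale; burden dimensions are inverted."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    total = 0.0
    for name, weight in weights.items():
        value = scores[name]
        if name in BURDENS:
            value = scale_max + 1 - value  # a raw 1 (low burden) becomes 5
        total += weight * value
    return total / sum(weights.values())


# Placeholder countries with made-up scores, for illustration only.
candidates = {
    "Country A": {"startup_predictability": 5, "site_depth": 3, "patient_access": 3,
                  "protocol_fit": 4, "documentation_risk": 2, "language_burden": 1,
                  "oversight_intensity": 2, "rescue_cost": 2},
    "Country B": {"startup_predictability": 3, "site_depth": 5, "patient_access": 5,
                  "protocol_fit": 3, "documentation_risk": 4, "language_burden": 3,
                  "oversight_intensity": 4, "rescue_cost": 4},
}

ranking = sorted(candidates, key=lambda name: country_score(candidates[name]), reverse=True)
for name in ranking:
    print(f"{name}: {country_score(candidates[name]):.2f}")
```

In this made-up case the country with deeper sites and larger patient access still ranks lower, because its documentation, oversight, and rescue burdens outweigh the volume advantage.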

CRAs and CRCs should read this report differently. For them, country rankings reveal where operational excellence will matter most. If a country is rising because sponsors value documentation rigor and site discipline, that creates opportunity for professionals who master GCP compliance essentials, protocol management, adverse-event reporting, study documentation, and inspection readiness. It also shows why shallow “I want to work in clinical research” positioning fails in serious hiring markets.

If you are career-focused, use the ranking to decide where to aim your learning and networking. Countries that attract complex trials reward people who understand the entire operating chain, from CRA pathways and CRC certification expectations to project-manager growth, regulatory careers, and quality-auditor advancement. Then build visibility in the right places through clinical research networking groups and forums, LinkedIn groups for clinical research professionals, clinical research certification providers, and top freelance clinical research directories.

The real lesson of 2026 is simple: top countries are not just large countries. They are countries where the protocol, the sites, the oversight model, and the study team can stay in control at the same time. That is the difference between a study that looks good at kickoff and a study that still looks good at audit.

6. FAQs

-

There is no universal best country for every protocol, but the United States remains the strongest all-around choice because it combines site depth, specialist access, therapeutic breadth, and sponsor familiarity. That said, many studies will get a better outcome by combining the U.S. with countries that improve speed, value, or recruitment fit, such as Australia for early-phase acceleration, Spain for disciplined EU execution, or Canada for predictable review and quality control.

-

High trial volume alone does not make a country a top choice. Volume without execution is a trap. A country can have huge patient pools and still disappoint because the wrong sites were chosen, CRC bandwidth was weak, investigator attention was diluted, or the protocol did not match local workflow realities. This is why patient recruitment mastery, site qualification discipline, documentation quality, and monitoring technique matter more than country hype.

-

For many programs, Australia is one of the strongest tactical choices when startup speed is the core priority. The UK is also increasingly attractive where combined review and ecosystem strength matter. But “fast” only helps if the study can still recruit, retain, and document cleanly afterward. Strong teams pair startup speed with sample-size realism, resource allocation, amendment control, and sponsor governance.

-

The United States, China, Spain, Germany, South Korea, and Japan are especially strong when the protocol needs specialist investigators, tighter inclusion criteria, better hospital infrastructure, and stronger data discipline. These are the studies where endpoint clarity, blinding protection, DMC oversight, and medical-monitor review become decisive.

-

Emerging markets are worth including when the protocol truly fits them and the sponsor is willing to govern them properly. They can add patient access, cost leverage, and strategic diversification, but only when teams invest in risk management, vendor control, regulatory documentation, and quality assurance. Cheap countries become expensive when rescue work begins.

-

Build a weighted scorecard that includes startup predictability, enrollment realism, site depth, data quality, AE-reporting burden, language complexity, vendor maturity, and inspection risk. Then stress-test the model against protocol deviations, GCP essentials, audit preparedness, and stakeholder communication plans. If a country scores well only because it is cheap, the model is wrong.
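
One way to test the "cheap only" warning is to re-rank the candidates with the cost weight removed and see who falls. The sketch below assumes a hypothetical `cost_advantage` criterion alongside a few of the criteria listed above. The weights, placeholder country names, and scores are invented for illustration, and every score is assumed to be oriented so that higher is better.

```python
def weighted_score(scores, weights):
    """Weighted average of the criteria present in `weights`."""
    total_weight = sum(weights.values())
    return sum(weights[k] * scores[k] for k in weights) / total_weight


def rank(countries, weights):
    """Country names ordered from best to worst weighted score."""
    return sorted(countries, key=lambda name: weighted_score(countries[name], weights),
                  reverse=True)


def cost_dependent(countries, weights, cost_key="cost_advantage"):
    """Countries whose rank position worsens once the cost weight is dropped."""
    without_cost = {k: w for k, w in weights.items() if k != cost_key}
    base = rank(countries, weights)
    stressed = rank(countries, without_cost)
    return [name for name in base if stressed.index(name) > base.index(name)]


# Hypothetical weights; cost_advantage is an assumed extra criterion.
weights = {
    "startup_predictability": 0.20,
    "enrollment_realism": 0.20,
    "site_depth": 0.15,
    "data_quality": 0.20,
    "cost_advantage": 0.25,
}

# Placeholder countries with made-up 1-5 scores (higher is better).
countries = {
    "Country X": {"startup_predictability": 2, "enrollment_realism": 3, "site_depth": 2,
                  "data_quality": 2, "cost_advantage": 5},
    "Country Y": {"startup_predictability": 4, "enrollment_realism": 3, "site_depth": 3,
                  "data_quality": 4, "cost_advantage": 1},
}

print("With cost:", rank(countries, weights))
print("Flagged as cost-dependent:", cost_dependent(countries, weights))
```

A country that leads the list only while the cost weight is present is a candidate for the situation described above, and its scorecard deserves a second look before commitment.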

-

The best countries for career growth are usually the countries attracting complex, well-governed studies. That often includes the U.S., UK, Spain, Germany, Canada, Australia, China, and South Korea. Professionals should align that reality with CRA exam preparation, CRC exam readiness, career-roadmap content, and networking groups that surface real opportunities.